
We Experience Our World Through Sensation

LEARNING OBJECTIVES

1. Review and summarize the capacities and limitations of human sensation.

2. Explain the difference between sensation and perception and describe how psychologists measure sensory and difference thresholds.

Sensory Thresholds: What Can We Experience?

Humans possess powerful sensory capacities that allow us to sense the kaleidoscope of sights, sounds, smells, and tastes that surround us. Our eyes detect light energy and our ears pick up sound waves. Our skin senses touch, pressure, hot, and cold. Our tongues react to the molecules of the foods we eat, and our noses detect scents in the air. The human perceptual system is wired for accuracy, and people are exceedingly good at making use of the wide variety of information available to them (Stoffregen & Bardy, 2001). [1]

In many ways our senses are quite remarkable. The human eye can detect the equivalent of a single candle flame burning 30 miles away and can distinguish among more than 300,000 different colors. The human ear can detect sounds as low as 20 hertz (vibrations per second) and as high as 20,000 hertz, and it can hear the tick of a clock about 20 feet away in a quiet room. We can taste a teaspoon of sugar dissolved in 2 gallons of water, and we are able to smell one drop of perfume diffused in a three-room apartment. We can feel the wing of a bee falling on our cheek from a height of 1 centimeter (Galanter, 1962). [2]

Link

To get an idea of the range of sounds that the human ear can sense, try testing your hearing here:

http://test-my-hearing.com

Although there is much that we do sense, there is even more that we do not. Dogs, bats, whales, and some rodents all have much better hearing than we do, and many animals have a far richer sense of smell. Birds are able to see the ultraviolet light that we cannot (see Figure 4.3 "Ultraviolet Light and Bird Vision") and can also sense the pull of the earth’s magnetic field. Cats have an extremely sensitive and sophisticated sense of touch, and they are able to navigate in complete darkness using their whiskers. The fact that different organisms have different sensations is part of their evolutionary adaptation. Each species is adapted to sensing the things that are most important to them, while being blissfully unaware of the things that don’t matter.

Measuring Sensation

Psychophysics is the branch of psychology that studies the effects of physical stimuli on sensory perceptions and mental states. The field of psychophysics was founded by the German psychologist Gustav Fechner (1801–1887), who was the first to study the relationship between the strength of a stimulus and a person’s ability to detect the stimulus.

The measurement techniques developed by Fechner and his colleagues are designed in part to help determine the limits of human sensation. One important criterion is the ability to detect very faint stimuli. The absolute threshold of a sensation is defined as the intensity of a stimulus that allows an organism to just barely detect it.

In a typical psychophysics experiment, an individual is presented with a series of trials in which a signal is sometimes presented and sometimes not, or in which two stimuli are presented that are either the same or different. Imagine, for instance, that you were asked to take a hearing test. On each of the trials your task is to indicate either “yes” if you heard a sound or “no” if you did not. The signals are purposefully made to be very faint, making accurate judgments difficult.

The problem for you is that the very faint signals create uncertainty. Because our ears are constantly sending background information to the brain, you will sometimes think that you heard a sound when none was there, and you will sometimes fail to detect a sound that is there. Your task is to determine whether the neural activity that you are experiencing is due to the background noise alone or is a result of a signal within the noise.

The responses that you give on the hearing test can be analyzed using signal detection analysis. Signal detection analysis is a technique used to determine the ability of the perceiver to separate true signals from background noise (Macmillan & Creelman, 2005; Wickens, 2002). [3] As you can see in Figure 4.4 "Outcomes of a Signal Detection Analysis", each judgment trial creates four possible outcomes: A hit occurs when you, as the listener, correctly say “yes” when there was a sound. A false alarm occurs when you respond “yes” even though there was no signal. In the other two cases you respond “no”: either a miss (saying “no” when there was a signal) or a correct rejection (saying “no” when there was in fact no signal).

Figure 4.4 Outcomes of a Signal Detection Analysis

Our ability to accurately detect stimuli is measured using a signal detection analysis. Two of the possible decisions (hits and correct rejections) are accurate; the other two (misses and false alarms) are errors.
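To make the four outcomes concrete, here is a minimal Python sketch of how a researcher might tally them from a hearing test. It assumes each trial is recorded as a pair of true/false values (was a sound presented, did the listener say “yes”); the function names and the sample trials are hypothetical and for illustration only.

```python
from collections import Counter

def classify(signal_present: bool, said_yes: bool) -> str:
    """Return the signal detection outcome for a single yes/no trial."""
    if signal_present and said_yes:
        return "hit"
    if signal_present and not said_yes:
        return "miss"
    if not signal_present and said_yes:
        return "false alarm"
    return "correct rejection"

def tally_outcomes(trials):
    """Count each outcome over a list of (signal_present, said_yes) pairs."""
    return Counter(classify(signal, yes) for signal, yes in trials)

# Hypothetical hearing-test record: (was a sound presented?, did the listener say "yes"?)
trials = [(True, True), (True, False), (False, True), (False, False), (True, True)]
counts = tally_outcomes(trials)
print(dict(counts))
```

Hits and correct rejections are the accurate decisions; misses and false alarms are the errors, exactly as in the figure.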

The analysis of the data from a psychophysics experiment creates two measures. One measure, known as sensitivity, refers to the true ability of the individual to detect the presence or absence of signals. People who have better hearing will have higher sensitivity than will those with poorer hearing. The other measure, response bias, refers to a behavioral tendency to respond “yes” to the trials, which is independent of sensitivity.
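One common way to compute these two measures, assuming the standard equal-variance Gaussian model described in detection theory texts such as Macmillan and Creelman (2005), converts the hit rate and the false-alarm rate to z scores. Sensitivity is d′ = z(hit rate) − z(false-alarm rate), and the bias measure c is the negative average of the two z scores. The Python sketch below follows that recipe; the small correction that adds 0.5 to the counts (so that rates of exactly 0 or 1 do not produce infinite z scores) and the sample counts are assumptions chosen for illustration.

```python
from statistics import NormalDist

_z = NormalDist().inv_cdf  # inverse of the standard normal cumulative distribution

def sdt_measures(hits, misses, false_alarms, correct_rejections):
    """Return (d_prime, c) under the equal-variance Gaussian model.

    A log-linear correction (add 0.5 to each count, 1 to each total) keeps
    both rates strictly between 0 and 1 so the z-transform stays finite.
    """
    hit_rate = (hits + 0.5) / (hits + misses + 1)
    fa_rate = (false_alarms + 0.5) / (false_alarms + correct_rejections + 1)
    z_hit, z_fa = _z(hit_rate), _z(fa_rate)
    d_prime = z_hit - z_fa       # sensitivity: separation of signal from noise
    c = -(z_hit + z_fa) / 2      # response bias: negative means leaning toward "yes"
    return d_prime, c

# Hypothetical results: 50 sound trials and 50 silent trials.
d, c = sdt_measures(hits=40, misses=10, false_alarms=15, correct_rejections=35)
print(f"d' = {d:.2f}, c = {c:.2f}")
```

With these hypothetical counts the listener says “yes” on 55 of 100 trials, so c comes out slightly negative (a mild lean toward “yes”), while d′ is positive because hits clearly outnumber false alarms.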

Imagine, for instance, that rather than taking a hearing test, you are a soldier on guard duty, and your job is to detect the very faint sound of a breaking branch that indicates that an enemy is nearby. In this case, making a false alarm by alerting the other soldiers to the sound might not be as costly as a miss (a failure to report the sound), which could be deadly. Therefore, you might well adopt a very lenient response bias in which you send a warning signal whenever you are at all unsure. Your responses may then not be very accurate (you will make a lot of false alarms, even though your sensitivity, your underlying ability to hear the sound, has not changed), and yet the extreme response bias can save lives.
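In signal detection theory, adopting a more lenient response bias raises both hits and false alarms without changing sensitivity. The Python sketch below illustrates this under the equal-variance Gaussian model: the guard's sensitivity is held at a hypothetical d′ of 1.0, and only the decision criterion (how much internal evidence is needed before saying “yes”) is moved. The two criterion values are arbitrary assumptions.

```python
from statistics import NormalDist

STD = NormalDist()  # standard normal distribution: mean 0, standard deviation 1

def rates_for_criterion(d_prime, criterion):
    """Hit and false-alarm rates for an observer who says "yes" whenever the
    internal evidence exceeds `criterion` (equal-variance Gaussian model)."""
    hit_rate = 1 - STD.cdf(criterion - d_prime)  # evidence on signal trials ~ N(d', 1)
    fa_rate = 1 - STD.cdf(criterion)             # evidence on noise trials ~ N(0, 1)
    return hit_rate, fa_rate

d_prime = 1.0  # hypothetical, fixed hearing ability
for label, k in [("strict", 1.5), ("lenient", -0.5)]:
    h, f = rates_for_criterion(d_prime, k)
    recovered = STD.inv_cdf(h) - STD.inv_cdf(f)  # sensitivity computed back from the rates
    print(f"{label:8s} hits={h:.2f} false alarms={f:.2f} d'={recovered:.2f}")
```

Both criteria yield the same d′ when it is recovered from the rates, but the lenient guard catches far more real intruders at the price of many more false alarms.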

Another application of signal detection occurs when medical technicians study body images for the presence of cancerous tumors. Again, a miss (in which the technician incorrectly determines that there is no tumor) can be very costly, but false alarms (referring patients who do not have tumors to further testing) also have costs. The ultimate decisions that the technicians make are based on the quality of the signal (clarity of the image), their experience and training (the ability to recognize certain shapes and textures of tumors), and their best guesses about the relative costs of misses versus false alarms.

Although we have focused to this point on the absolute threshold, a second important criterion concerns the ability to assess differences between stimuli. The difference threshold (or just noticeable difference [JND]) refers to the change in a stimulus that can just barely be detected by the organism. The German physiologist Ernst Weber (1795–1878) made an important discovery about the JND, namely, that the ability to detect differences depends not so much on the size of the difference as on the size of the difference in relation to the absolute size of the stimulus. Weber’s law maintains that the just noticeable difference of a stimulus is a constant proportion of the original intensity of the stimulus. As an example, if you have a cup of coffee that has only a very little bit of sugar in it (say 1 teaspoon), adding another teaspoon of sugar will make a big difference in taste. But if you added that same teaspoon to a cup of coffee that already had 5 teaspoons of sugar in it, then you probably wouldn’t taste the difference as much (in fact, according to Weber’s law, you would have to add 5 more teaspoons to make the same difference in taste).
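Weber’s law is often written as a ratio. Writing I for the original intensity, ΔI for the change in intensity, and k for a constant (the Weber fraction) whose value depends on the sense being tested, the coffee example works out as follows. The value k = 1 is simply what the illustrative teaspoon numbers imply, not a measured Weber fraction for taste.

```latex
\begin{aligned}
\frac{\Delta I}{I} &= k
  && \text{(Weber's law)}\\
\frac{1\ \text{tsp}}{1\ \text{tsp}} &= 1 = k
  && \text{(adding 1 tsp to a cup with 1 tsp)}\\
\Delta I &= k \times 5\ \text{tsp} = 5\ \text{tsp}
  && \text{(the same proportional change, starting from 5 tsp)}
\end{aligned}
```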

One interesting application of Weber’s law is in our everyday shopping behavior. Our tendency to perceive cost differences between products is dependent not only on the amount of money we will spend or save, but also on the amount of money saved relative to the price of the purchase. I would venture to say that if you were about to buy a soda or candy bar in a convenience store and the price of the items ranged from $1 to $3, you would think that the $3 item cost “a lot more” than the $1 item. But now imagine that you were comparing two music systems, one that cost $397 and one that cost $399. Probably you would think that the cost of the two systems was “about the same,” even though buying the cheaper one would still save you $2.
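The arithmetic behind this example can be sketched in a few lines of Python. Measuring each $2 gap against the cheaper item's price is an assumption made for illustration, and the function name is hypothetical.

```python
def relative_difference(cheaper, pricier):
    """Price gap expressed as a fraction of the cheaper item's price.

    Weber's law suggests that how large a difference feels tracks this
    ratio rather than the raw dollar amount.
    """
    return (pricier - cheaper) / cheaper

snack = relative_difference(1.00, 3.00)       # a $2 gap on a $1 item
stereo = relative_difference(397.00, 399.00)  # the same $2 gap on a $397 item
print(f"snack: {snack:.0%}, music system: {stereo:.1%}")
# prints: snack: 200%, music system: 0.5%
```

The dollar savings are identical, but as a proportion of the price the snack difference is roughly 400 times larger, which fits the intuition that one gap feels big and the other trivial.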

Research Focus: Influence without Awareness

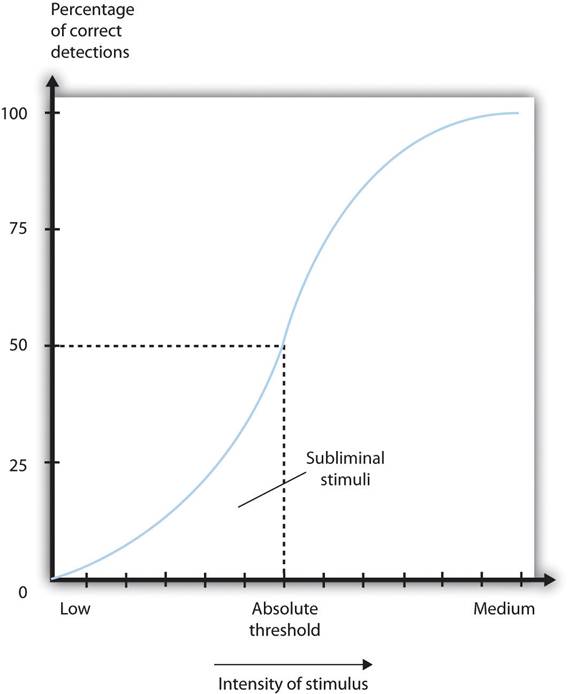

If you study Figure 4.5 "Absolute Threshold", you will see that the absolute threshold is the point where we become aware of a faint stimulus. After that point, we say that the stimulus is conscious because we can accurately report on its existence (or its nonexistence) better than 50% of the time. But can subliminal stimuli (events that occur below the absolute threshold and of which we are not conscious) have an influence on our behavior?

Figure 4.5 Absolute Threshold

As the intensity of a stimulus increases, we are more likely to perceive it. Stimuli below the absolute threshold can still have at least some influence on us, even though we cannot consciously detect them.
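The curve in Figure 4.5 is often modeled as an S-shaped psychometric function. The Python sketch below uses a logistic curve, which is one common choice among several; the threshold and spread values are hypothetical and in arbitrary units. Here the absolute threshold is taken to be the intensity at which the probability of detection crosses 50%.

```python
import math

def detection_probability(intensity, threshold, spread):
    """Logistic psychometric function: probability of reporting the stimulus.

    By construction the probability is exactly 0.5 when `intensity`
    equals `threshold`; `spread` controls how gradual the rise is.
    """
    return 1 / (1 + math.exp(-(intensity - threshold) / spread))

THRESHOLD, SPREAD = 10.0, 2.0  # hypothetical values, arbitrary units
for intensity in (4, 8, 10, 12, 16):
    p = detection_probability(intensity, THRESHOLD, SPREAD)
    print(f"intensity {intensity:>2}: detected {p:.0%} of the time")
```

Intensities somewhat below the threshold are still reported on some trials, and intensities somewhat above it are occasionally missed, which is why the threshold is defined statistically rather than as a sharp line.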

A variety of research programs have found that subliminal stimuli can influence our judgments and behavior, at least in the short term (Dijksterhuis, 2010). [4] But whether the presentation of subliminal stimuli can influence the products that we buy has been a more controversial topic in psychology. In one relevant experiment, Karremans, Stroebe, and Claus (2006) [5] had Dutch college students view a series of computer trials in which a string of letters such as BBBBBBBBB or BBBbBBBBB were presented on the screen. To be sure they paid attention to the display, the students were asked to note whether the strings contained a small b. However, immediately before each of the letter strings, the researchers presented either the name of a drink that is popular in Holland (Lipton Ice) or a control string containing the same letters as Lipton Ice (NpeicTol). These words were presented so quickly (for only about one fiftieth of a second) that the participants could not see them.

Then the students were asked to indicate their intention to drink Lipton Ice by answering questions such as “If you would sit on a terrace now, how likely is it that you would order Lipton Ice,” and also to indicate how thirsty they were at the time. The researchers found that the students who had been exposed to the “Lipton Ice” words (and particularly those who indicated that they were already thirsty) were significantly more likely to say that they would drink Lipton Ice than were those who had been exposed to the control words.

If it were effective, a procedure such as this (we can call the technique “subliminal advertising” because it advertises a product outside awareness) would have some major advantages for advertisers, because it would allow them to promote their products without directly interrupting the consumers’ activity and without the consumers’ knowing they are being persuaded. People cannot counterargue with, or attempt to avoid being influenced by, messages received outside awareness. Due to fears that people may be influenced without their knowing, subliminal advertising has been legally banned in many countries, including Australia, Great Britain, and the United States.

Although it has been shown to work in some research, subliminal advertising’s effectiveness is still uncertain. Charles Trappey (1996) [6] conducted a meta-analysis in which he combined 23 leading research studies that had tested the influence of subliminal advertising on consumer choice. The results of his meta-analysis showed that subliminal advertising had a negligible effect on consumer choice. And Saegert (1987, p. 107) [7] concluded that “marketing should quit giving subliminal advertising the benefit of the doubt,” arguing that the influences of subliminal stimuli are usually so weak that they are normally overshadowed by the person’s own decision making about the behavior.

Taken together then, the evidence for the effectiveness of subliminal advertising is weak, and its effects may be limited to only some people and only some conditions. You probably don’t have to worry too much about being subliminally persuaded in your everyday life, even if subliminal ads are allowed in your country. But even if subliminal advertising is not all that effective itself, there are plenty of other indirect advertising techniques that are used and that do work. For instance, many ads for automobiles and alcoholic beverages are subtly sexualized, which encourages the consumer to indirectly (even if not subliminally) associate these products with sexuality. And there are the ever more frequent “product placement” techniques, in which images of brands (cars, sodas, electronics, and so forth) are placed on websites and in popular television shows and movies. Harris, Bargh, and Brownell (2009) [8] found that being exposed to food advertising on television significantly increased child and adult snacking behaviors, again suggesting that the effects of perceived images, even if presented above the absolute threshold, may nevertheless be very subtle.

Another example of processing that occurs outside our awareness is seen when certain areas of the visual cortex are damaged, causing blindsight, a condition in which people are unable to consciously report on visual stimuli but nevertheless are able to accurately answer questions about what they are seeing. When people with blindsight are asked directly what stimuli look like, or to determine whether these stimuli are present at all, they cannot do so at better than chance levels. They report that they cannot see anything. However, when they are asked more indirect questions, they are able to give correct answers. For example, people with blindsight are able to correctly determine an object’s location and direction of movement, as well as identify simple geometrical forms and patterns (Weiskrantz, 1997). [9] It seems that although conscious reports of the visual experiences are not possible, there is still a parallel and implicit process at work, enabling people to perceive certain aspects of the stimuli.

KEY TAKEAWAYS

· Sensation is the process of receiving information from the environment through our sensory organs. Perception is the process of interpreting and organizing the incoming information so that we can understand it and react accordingly.

· Transduction is the conversion of stimuli detected by receptor cells to electrical impulses that are transported to the brain.

· Although our experiences of the world are rich and complex, humans—like all species—have their own adapted sensory strengths and sensory limitations.

· Sensation and perception work together in a fluid, continuous process.

· Our judgments in detection tasks are influenced both by the absolute threshold of the signal and by our current motivations and experiences. Signal detection analysis is used to differentiate sensitivity from response biases.

· The difference threshold, or just noticeable difference, is the smallest change in a stimulus that can be detected about 50% of the time. According to Weber’s law, the just noticeable difference increases in proportion to the total intensity of the stimulus.

· Research has found that stimuli can influence behavior even when they are presented below the absolute threshold (i.e., subliminally). The effectiveness of subliminal advertising, however, has not been shown to be of large magnitude.

EXERCISES AND CRITICAL THINKING

1. The accidental shooting of one’s own soldiers (friendly fire) frequently occurs in wars. Based on what you have learned about sensation, perception, and psychophysics, why do you think soldiers might mistakenly fire on their own soldiers?

2. If we pick up two letters, one that weighs 1 ounce and one that weighs 2 ounces, we can notice the difference. But if we pick up two packages, one that weighs 3 pounds 1 ounce and one that weighs 3 pounds 2 ounces, we can’t tell the difference. Why?

3. Take a moment and lie down quietly in your bedroom. Notice the variety and levels of what you can see, hear, and feel. Does this experience help you understand the idea of the absolute threshold?

[1] Stoffregen, T. A., & Bardy, B. G. (2001). On specification and the senses. Behavioral and Brain Sciences, 24(2), 195–261.

[2] Galanter, E. (1962). Contemporary Psychophysics. In R. Brown, E. Galanter, E. H. Hess, & G. Mandler (Eds.), New directions in psychology. New York, NY: Holt, Rinehart and Winston.

[3] Macmillan, N. A., & Creelman, C. D. (2005). Detection theory: A user’s guide (2nd ed.). Mahwah, NJ: Lawrence Erlbaum Associates; Wickens, T. D. (2002). Elementary signal detection theory. New York, NY: Oxford University Press.

[4] Dijksterhuis, A. (2010). Automaticity and the unconscious. In S. T. Fiske, D. T. Gilbert, & G. Lindzey (Eds.), Handbook of social psychology (5th ed., Vol. 1, pp. 228–267). Hoboken, NJ: John Wiley & Sons.

[5] Karremans, J. C., Stroebe, W., & Claus, J. (2006). Beyond Vicary’s fantasies: The impact of subliminal priming and brand choice. Journal of Experimental Social Psychology, 42(6), 792–798.

[6] Trappey, C. (1996). A meta-analysis of consumer choice and subliminal advertising. Psychology and Marketing, 13, 517–530.

[7] Saegert, J. (1987). Why marketing should quit giving subliminal advertising the benefit of the doubt. Psychology and Marketing, 4(2), 107–120.

[8] Harris, J. L., Bargh, J. A., & Brownell, K. D. (2009). Priming effects of television food advertising on eating behavior. Health Psychology, 28(4), 404–413.

[9] Weiskrantz, L. (1997). Consciousness lost and found: A neuropsychological exploration. New York, NY: Oxford University Press.

Seeing

LEARNING OBJECTIVES

1. Identify the key structures of the eye and the role they play in vision.

2. Summarize how the eye and the visual cortex work together to sense and perceive the visual stimuli in the environment, including processing colors, shape, depth, and motion.

Whereas many other animals rely primarily on hearing, smell, or touch to understand the world around them, human beings rely in large part on vision. A large part of our cerebral cortex is devoted to seeing, and we have substantial visual skills. Seeing begins when light falls on the eyes, initiating the process of transduction. Once this visual information reaches the visual cortex, it is processed by a variety of neurons that detect colors, shapes, and motion, and that create meaningful perceptions out of the incoming stimuli.

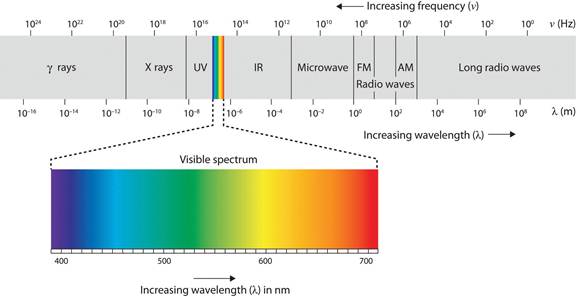

The air around us is filled with a sea of electromagnetic energy: pulses of energy waves that can carry information from place to place. As you can see in Figure 4.6 "The Electromagnetic Spectrum", electromagnetic waves vary in their wavelength (the distance between one wave peak and the next wave peak), with the shortest gamma waves being only a tiny fraction of a nanometer in length and the longest radio waves being hundreds of kilometers long. Humans are blind to almost all of this energy: our eyes detect only the range from about 400 to 700 billionths of a meter (400 to 700 nanometers), the part of the electromagnetic spectrum known as the visible spectrum.

Figure 4.6 The Electromagnetic Spectrum

Only a small fraction of the electromagnetic energy that surrounds us (the visible spectrum) is detectable by the human eye.

The Sensing Eye and the Perceiving Visual Cortex

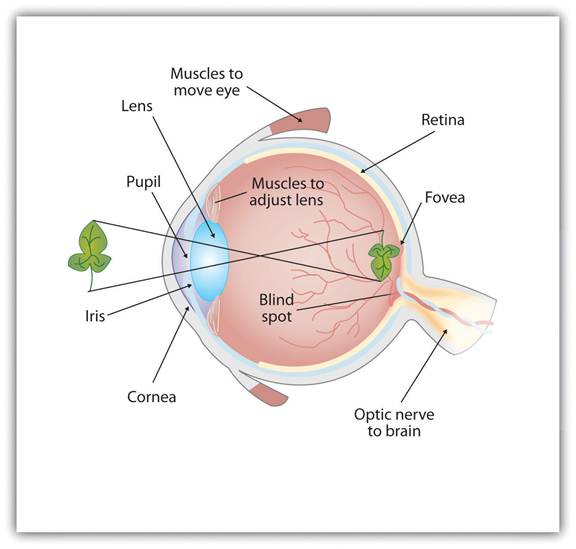

As you can see in Figure 4.7 "Anatomy of the Human Eye", light enters the eye through the cornea, a clear covering that protects the eye and begins to focus the incoming light. The light then passes through the pupil, a small opening in the center of the eye. The pupil is surrounded by the iris, the colored part of the eye that controls the size of the pupil by constricting or dilating in response to light intensity. When we enter a dark movie theater on a sunny day, for instance, muscles in the iris open the pupil and allow more light to enter. Complete adaptation to the dark may take up to 20 minutes.

Behind the pupil is the lens, a structure that focuses the incoming light on the retina, the layer of tissue at the back of the eye that contains photoreceptor cells. As our eyes move from near objects to distant objects, a process known as visual accommodation occurs. Visual accommodation is the process of changing the curvature of the lens to keep the light entering the eye focused on the retina. Rays from the top of the image strike the bottom of the retina and vice versa, and rays from the left side of the image strike the right part of the retina and vice versa, causing the image on the retina to be upside down and backward. Furthermore, the image projected on the retina is flat, and yet our final perception of the image will be three dimensional.

Figure 4.7 Anatomy of the Human Eye

Light enters the eye through the transparent cornea, passing through the pupil at the center of the iris. The lens adjusts to focus the light on the retina, where it appears upside down and backward. Receptor cells on the retina send information via the optic nerve to the visual cortex.
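The geometry described above, in which top and bottom swap and left and right swap, amounts to rotating the image by 180 degrees. A tiny Python sketch makes this concrete; it treats a scene as a grid of characters, a simplification that ignores focus, size, and the curvature of the retina, and the function name is hypothetical.

```python
def retinal_image(scene):
    """Flip a scene top-to-bottom and left-to-right, as the lens does.

    `scene` is a list of equal-length strings, one per row, top row first.
    """
    return [row[::-1] for row in reversed(scene)]

scene = [
    "sky.",
    "tree",
    "road",
]
for row in retinal_image(scene):
    print(row)
```

Applying the flip twice returns the original scene, so the inversion by itself loses no information about the layout of the image.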

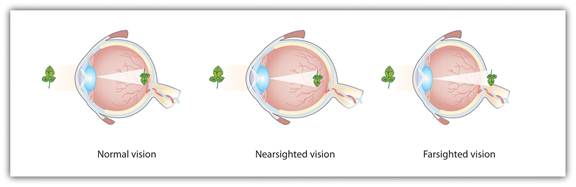

Accommodation is not always perfect, and in some cases the light that is hitting the retina is a bit out of focus. As you can see in Figure 4.8 "Normal, Nearsighted, and Farsighted Eyes", if the focus is in front of the retina, we say that the person is nearsighted, and when the focus is behind the retina we say that the person is farsighted. Eyeglasses and contact lenses correct this problem by adding another lens in front of the eye, and laser eye surgery corrects the problem by reshaping the cornea.

Figure 4.8 Normal, Nearsighted, and Farsighted Eyes

For people with normal vision (left), the lens properly focuses incoming light on the retina. For people who are nearsighted (center), images from far objects focus too far in front of the retina, whereas for people who are farsighted (right), images from near objects focus too far behind the retina. Eyeglasses solve the problem by adding a secondary, corrective, lens.

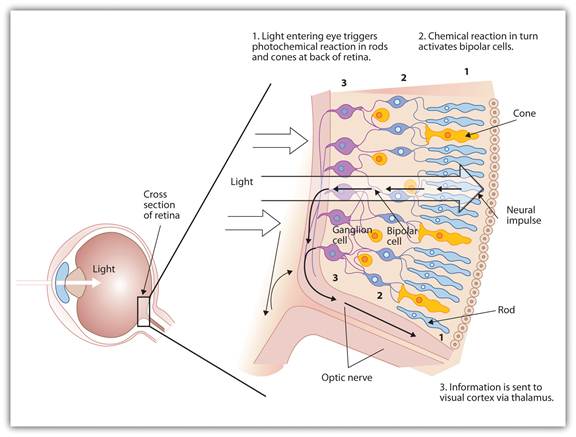

The retina contains layers of neurons specialized to respond to light (see Figure 4.9 "The Retina With Its Specialized Cells"). As light falls on the retina, it first activates receptor cells known as rods and cones. The activation of these cells then spreads to the bipolar cells and then to the ganglion cells, which gather together and converge, like the strands of a rope, forming the optic nerve. The optic nerve is a bundle of about a million ganglion cell fibers that sends vast amounts of visual information, via the thalamus, to the brain. Because the retina and the optic nerve are active processors and analyzers of visual information, it is reasonable to think of these structures as an extension of the brain itself.

Figure 4.9 The Retina With Its Specialized Cells

When light falls on the retina, it creates a photochemical reaction in the rods and cones at the back of the retina. The reactions then continue to the bipolar cells, the ganglion cells, and eventually to the optic nerve.

Rods are visual neurons that specialize in detecting black, white, and gray colors. There are about 120 million rods in each eye. The rods do not provide a lot of detail about the images we see, but because they are highly sensitive to weak light, they help us see in dim light, for instance, at night. Because the rods are located primarily around the edges of the retina, they are particularly active in peripheral vision (when you need to see something at night, try looking slightly away from what you want to see). Cones are visual neurons that are specialized in detecting fine detail and colors. The 5 million or so cones in each eye enable us to see in color, but they operate best in bright light. The cones are located primarily in and around the fovea, which is the central point of the retina.

To demonstrate the difference between rods and cones in attention to detail, choose a word in this text and focus on it. Do you notice that the words a few inches to the side seem more blurred? This is because the word you are focusing on strikes the detail-oriented cones, while the words surrounding it strike the less-detail-oriented rods, which are located on the periphery.

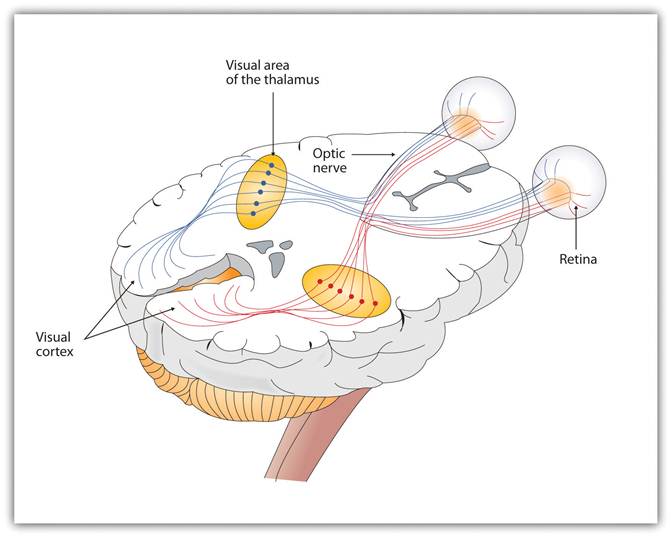

As you can see in Figure 4.11 "Pathway of Visual Images Through the Thalamus and Into the Visual Cortex", the sensory information received by the retina is relayed through the thalamus to corresponding areas in the visual cortex, which is located in the occipital lobe at the back of the brain. Although the principle of contralateral control might lead you to expect that the left eye would send information to the right brain hemisphere and vice versa, nature is smarter than that. In fact, the left and right eyes each send information to both the left and the right hemisphere, and the visual cortex processes each of the cues separately and in parallel. This is an adaptational advantage to an organism that loses sight in one eye, because even if only one eye is functional, both hemispheres will still receive input from it.

Figure 4.11 Pathway of Visual Images Through the Thalamus and Into the Visual Cortex

The left and right eyes each send information to both the left and the right brain hemisphere.

The visual cortex is made up of specialized neurons that turn the sensations they receive from the optic nerve into meaningful images. Because there are no photoreceptor cells at the place where the optic nerve leaves the retina, a hole or blind spot in our vision is created (see Figure 4.12 "Blind Spot Demonstration"). When both of our eyes are open, we don’t experience a problem because our eyes are constantly moving, and one eye makes up for what the other eye misses. But the visual system is also designed to deal with this problem if only one eye is open—the visual cortex simply fills in the small hole in our vision with similar patterns from the surrounding areas, and we never notice the difference. The ability of the visual system to cope with the blind spot is another example of how sensation and perception work together to create meaningful experience.

Figure 4.12 Blind Spot Demonstration

You can get an idea of the extent of your blind spot (the place where the optic nerve leaves the retina) by trying this demonstration. Close your left eye and stare with your right eye at the cross in the diagram. You should be able to see the elephant image to the right (don’t look at it, just notice that it is there). If you can’t see the elephant, move closer or farther away until you can. Now slowly move so that you are closer to the image while you keep looking at the cross. At one distance (probably a foot or so), the elephant will completely disappear from view because its image has fallen on the blind spot.

Perception is created in part through the simultaneous action of thousands of feature detector neurons—specialized neurons, located in the visual cortex, that respond to the strength, angles, shapes, edges, and movements of a visual stimulus (Kelsey, 1997; Livingstone & Hubel, 1988). [2] The feature detectors work in parallel, each performing a specialized function. When faced with a red square, for instance, the parallel line feature detectors, the horizontal line feature detectors, and the red color feature detectors all become activated. This activation is then passed on to other parts of the visual cortex where other neurons compare the information supplied by the feature detectors with images stored in memory. Suddenly, in a flash of recognition, the many neurons fire together, creating the single image of the red square that we experience (Rodriguez et al., 1999). [3]
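As a loose computational analogy (not a model of real cortical neurons), the Python sketch below runs two toy “detectors” independently over the same small grid of light and dark points. Each detector responds only to its own feature, here horizontal or vertical runs of light points, somewhat as the text describes feature detectors working in parallel. The grids and function names are hypothetical.

```python
def horizontal_line_response(image):
    """Toy horizontal-line detector: count side-by-side pairs of light points."""
    return sum(
        1
        for row in image
        for left, right in zip(row, row[1:])
        if left and right
    )

def vertical_line_response(image):
    """Toy vertical-line detector: count stacked pairs of light points."""
    return sum(
        1
        for upper, lower in zip(image, image[1:])
        for a, b in zip(upper, lower)
        if a and b
    )

# 1 = light, 0 = dark. A square outline and a single horizontal bar.
square = [
    [1, 1, 1, 1, 1],
    [1, 0, 0, 0, 1],
    [1, 0, 0, 0, 1],
    [1, 0, 0, 0, 1],
    [1, 1, 1, 1, 1],
]
bar = [[0] * 5, [0] * 5, [1] * 5, [0] * 5, [0] * 5]

detectors = {"horizontal": horizontal_line_response, "vertical": vertical_line_response}
for name, image in (("square", square), ("bar", bar)):
    responses = {label: detect(image) for label, detect in detectors.items()}
    print(name, responses)
```

For the square outline both detectors respond, while for the single horizontal bar only the horizontal detector does; combining the pattern of responses is what lets later stages tell the two shapes apart.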

Figure 4.13 The Necker Cube

The Necker cube is an example of how the visual system creates perceptions out of sensations. We do not see a series of lines, but rather a cube. Which cube we see varies depending on the momentary outcome of perceptual processes in the visual cortex.

Some feature detectors are tuned to selectively respond to particularly important objects, for instance, faces, smiles, and other parts of the body (Downing, Jiang, Shuman, & Kanwisher, 2001; Haxby et al., 2001). [4] When researchers disrupted face recognition areas of the cortex using the magnetic pulses of transcranial magnetic stimulation (TMS), people were temporarily unable to recognize faces, and yet they were still able to recognize houses (McKone, Kanwisher, & Duchaine, 2007; Pitcher, Walsh, Yovel, & Duchaine, 2007). [5]

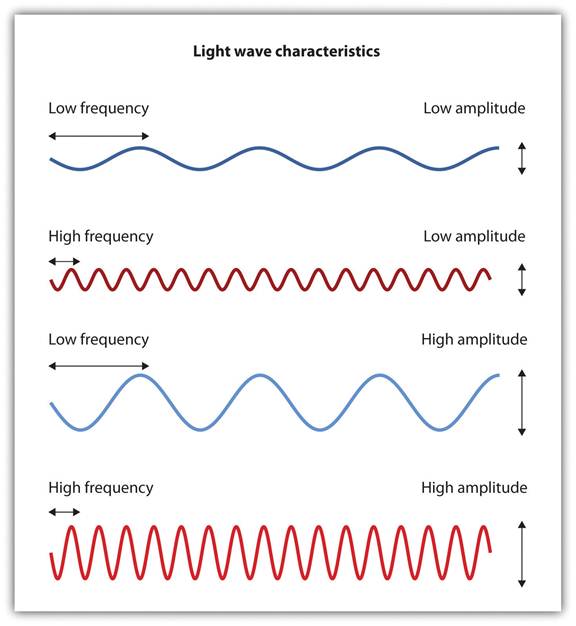

Perceiving Color

It has been estimated that the human visual system can detect and discriminate among 7 million color variations (Geldard, 1972), [6] but these variations are all created by the combinations of the three primary colors: red, green, and blue. The shade of a color, known as hue, is conveyed by the wavelength of the light that enters the eye (we see shorter wavelengths as more blue and longer wavelengths as more red), and we detect brightness from the intensity or height of the wave (bigger or more intense waves are perceived as brighter).

Figure 4.14 Low- and High-Frequency Sine Waves and Low- and High-Intensity Sine Waves and Their Corresponding Colors

Light waves with shorter wavelengths (higher frequencies) are perceived as more blue than red; light waves with higher intensity are seen as brighter.

In his important research on color vision, Hermann von Helmholtz (1821–1894), building on an earlier proposal by Thomas Young (1773–1829), theorized that color is perceived because the cones in the retina come in three types. One type of cone reacts primarily to blue light (short wavelengths), another reacts primarily to green light (medium wavelengths), and a third reacts primarily to red light (long wavelengths). The visual cortex then detects and compares the strength of the signals from each of the three types of cones, creating the experience of color. According to this Young-Helmholtz trichromatic color theory, what color we see depends on the mix of the signals from the three types of cones. If the brain is receiving primarily red and blue signals, for instance, it will perceive purple; if it is receiving primarily red and green signals it will perceive yellow; and if it is receiving messages from all three types of cones it will perceive white.
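The mixing rules in this paragraph can be written as a toy lookup table. In the Python sketch below, each argument is a hypothetical signal strength from 0 to 1 for the short- (blue), medium- (green), and long-wavelength (red) cones, and a cone type counts as “active” above an arbitrary cutoff. The purple, yellow, and white entries come from the text; the black and cyan entries are added only to complete the table and follow ordinary additive mixing of light. Real color vision depends on graded comparisons rather than on-off switches.

```python
def perceived_color(short, medium, long, cutoff=0.5):
    """Toy version of the trichromatic mixing rules.

    Each argument is a cone signal strength from 0 to 1. A cone type counts
    as "active" when its signal exceeds `cutoff` (an arbitrary assumption).
    """
    active = frozenset(
        name
        for name, level in (("blue", short), ("green", medium), ("red", long))
        if level > cutoff
    )
    names = {
        frozenset(): "black",
        frozenset({"blue"}): "blue",
        frozenset({"green"}): "green",
        frozenset({"red"}): "red",
        frozenset({"red", "blue"}): "purple",
        frozenset({"red", "green"}): "yellow",
        frozenset({"green", "blue"}): "cyan",
        frozenset({"red", "green", "blue"}): "white",
    }
    return names[active]

print(perceived_color(short=0.9, medium=0.1, long=0.8))  # red + blue signals
print(perceived_color(short=0.1, medium=0.9, long=0.9))  # red + green signals
```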

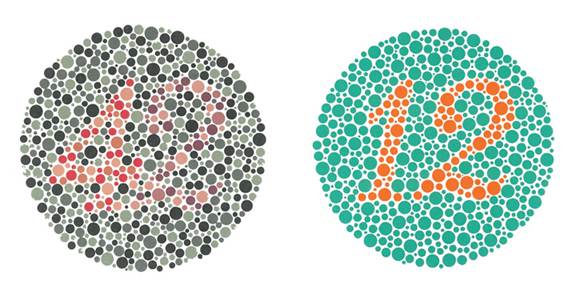

The different functions of the three types of cones are apparent in people who experience color blindness, the inability to detect green and/or red colors. About 1 in 50 people, mostly men, lack functioning in the red- or green-sensitive cones, leaving them able to distinguish fewer colors than people with normal color vision (Figure 4.15).

Figure 4.15

People with normal color vision can see the number 42 in the first image and the number 12 in the second (they are vague but apparent). However, people who are color blind cannot see the numbers at all.

Source: Courtesy of http://commons.wikimedia.org/wiki/File:Ishihara_11.PNG and http://commons.wikimedia.org/wiki/File:Ishihara_23.PNG.

The trichromatic color theory cannot explain all of human vision, however. For one, although the color purple does appear to us as a mixing of red and blue, yellow does not appear to be a mix of red and green. And people with color blindness, who cannot see either green or red, nevertheless can still see yellow. An alternative approach to the Young-Helmholtz theory, known as the opponent-process color theory, proposes that we analyze sensory information not in terms of three colors but rather in three sets of “opponent colors”: red-green, yellow-blue, and white-black. Evidence for the opponent-process theory comes from the fact that some neurons in the retina and in the visual cortex are excited by one color (e.g., red) but inhibited by another color (e.g., green).

One example of opponent processing occurs in the experience of an afterimage. If you stare at the flag on the left side of Figure 4.16 "U.S. Flag" for about 30 seconds (the longer you look, the better the effect), and then move your eyes to the blank area to the right of it, you will see the afterimage. When we stare at the green stripes, our green receptors habituate and begin to respond less strongly, whereas the red receptors remain at full strength. When we then switch our gaze, we see primarily the red part of the opponent process. Similar processes create blue after yellow and white after black.
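The afterimage explanation can be illustrated with a toy opponent-process model. The sketch rests on simplifying assumptions that are not in the text: cone signals lie between 0 and 1, each opponent channel is a simple difference of cone signals, and habituation is modeled as a drop in a cone type's gain proportional to how strongly it was stimulated. The function names and the `adaptation` parameter are hypothetical.

```python
# Toy sketch of opponent processing and afterimages.
# Assumptions (not from the text): cone signals lie in 0..1, the opponent
# channels are simple differences, and habituation lowers a cone type's gain
# in proportion to how strongly it was stimulated.


def opponent_channels(long: float, medium: float, short: float) -> dict:
    """Combine cone signals into the three opponent pairs named in the text."""
    return {
        "red_green": long - medium,                  # positive reads as red, negative as green
        "yellow_blue": (long + medium) / 2 - short,  # positive reads as yellow, negative as blue
        "white_black": (long + medium + short) / 3,  # overall brightness
    }


def afterimage(stared_at: tuple, adaptation: float = 0.5) -> dict:
    """Opponent signals evoked by a plain white field after staring at `stared_at` (L, M, S)."""
    # Cones that were driven hard respond less strongly afterward.
    gains = [1.0 - adaptation * level for level in stared_at]
    long, medium, short = gains  # a white field drives every cone at full strength (1.0)
    return opponent_channels(long, medium, short)


print(afterimage((0.0, 1.0, 0.0)))  # after green: red_green > 0, so the blank area looks reddish
print(afterimage((1.0, 1.0, 0.0)))  # after yellow: yellow_blue < 0, so it looks bluish
```

The same rule also produces the white-after-black effect: after staring at black, no cone has lost gain, so a neutral field looks brighter than it would after staring at white.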

Figure 4.16 U.S. Flag

The presence of an afterimage is best explained by the opponent-process theory of color perception. Stare at the flag for about 30 seconds, and then move your gaze to the blank space next to it. Do you see the afterimage?

Source: Photo courtesy of Mike Swanson, http://en.wikipedia.org/wiki/File:US_flag(inverted).svg.

The trichromatic and opponent-process mechanisms work together to produce color vision. When light rays enter the eye, the red, blue, and green cones on the retina respond to different degrees and send signals of different strengths. These color signals are then processed both by the ganglion cells of the retina, whose axons form the optic nerve, and by the neurons in the visual cortex (Gegenfurtner & Kiper, 2003). [7]

Perceiving Form

One of the important processes required in vision is the perception of form. German psychologists in the 1930s and 1940s, including Max Wertheimer (1880–1943), Kurt Koffka (1886–1941), and Wolfgang Köhler (1887–1967), argued that we create forms out of their component sensations based on the idea of the gestalt, a meaningfully organized whole. The idea of the gestalt is that the “whole is more than the sum of its parts.” Some examples of how gestalt principles lead us to see more than what is actually there are summarized in Table 4.1 "Summary of Gestalt Principles of Form Perception".

Table 4.1 Summary of Gestalt Principles of Form Perception

| Principle | Description | Example | Image |
|---|---|---|---|
| Figure and ground | We structure input such that we always see a figure (image) against a ground (background). | At right, you may see a vase or you may see two faces, but in either case, you will organize the image as a figure against a ground. | Figure 4.1 |
| Similarity | Stimuli that are similar to each other tend to be grouped together. | You are more likely to see three similar columns among the XYX characters at right than you are to see four rows. | Figure 4.1 |
| Proximity | We tend to group nearby figures together. | Do you see four or eight images at right? Principles of proximity suggest that you might see only four. | Figure 4.1 |
| Continuity | We tend to perceive stimuli in smooth, continuous ways rather than in more discontinuous ways. | At right, most people see a line of dots that moves from the lower left to the upper right, rather than a line that moves from the left and then suddenly turns down. The principle of continuity leads us to see most lines as following the smoothest possible path. | Figure 4.1 |
| Closure | We tend to fill in gaps in an incomplete image to create a complete, whole object. | Closure leads us to see a single spherical object at right rather than a set of unrelated cones. | Figure 4.1 |
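The proximity principle in Table 4.1 resembles what computer scientists call single-linkage grouping: items that are close enough to a neighbor end up in the same group. The sketch below uses that technique as a stand-in for the perceptual principle; the `max_gap` distance and the layout of eight figures arranged as four close pairs are hypothetical choices made for illustration.

```python
import math

# Sketch of the proximity principle as single-linkage grouping.
# Assumption (not from the text): two items belong together whenever a chain of
# neighbors, each no farther apart than `max_gap`, connects them.


def group_by_proximity(points, max_gap):
    """Return groups of (x, y) points, joining any two points within max_gap of each other."""
    remaining = list(points)
    groups = []
    while remaining:
        frontier = [remaining.pop()]
        group = []
        while frontier:
            current = frontier.pop()
            group.append(current)
            near = [p for p in remaining if math.dist(current, p) <= max_gap]
            for p in near:
                remaining.remove(p)
            frontier.extend(near)
        groups.append(sorted(group))
    return sorted(groups)


# Hypothetical layout: eight figures arranged as four close pairs.
figures = [(0, 0), (1, 0), (5, 0), (6, 0), (10, 0), (11, 0), (15, 0), (16, 0)]
print(len(group_by_proximity(figures, max_gap=2)))
```

With a small gap threshold the eight figures fall into four pairs, echoing the table's suggestion that we may see four images rather than eight.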

Perceiving Depth

Depth perception is the ability to perceive three-dimensional space and to accurately judge distance. Without depth perception, we would be unable to drive a car, thread a needle, or simply navigate our way around the supermarket (Howard & Rogers, 2001). [8] Research has found that depth perception is in part based on innate capacities and in part learned through experience (Witherington, 2005). [9]

Psychologists Eleanor Gibson and Richard Walk (1960) [10] tested the ability to perceive depth in 6- to 14-month-old infants by placing them on a visual cliff, a mechanism that gives the perception of a dangerous drop-off, in which infants can be safely tested for their perception of depth (Figure 4.22 "Visual Cliff"). The infants were placed on one side of the “cliff,” while their mothers called to them from the other side. Gibson and Walk found that most infants either crawled away from the cliff or remained on the board and cried, because they wanted to go to their mothers but perceived a chasm that they instinctively could not cross. Further research has found that even very young children who cannot yet crawl are fearful of heights (Campos, Langer, & Krowitz, 1970). [11] On the other hand, studies have also found that infants improve their hand-eye coordination as they learn to better grasp objects and as they gain more experience in crawling, indicating that depth perception is also learned (Adolph, 2000). [12]

Depth perception is the result of our use of depth cues, messages from our bodies and the external environment that supply us with information about space and distance. Binocular depth cues are depth cues that are created by retinal image disparity, the difference between the images on the two retinas that arises from the space between our eyes; they therefore require the coordination of both eyes. Because of retinal disparity, the images projected on each eye are slightly different from each other. The visual cortex automatically merges the two images into one, enabling us to perceive depth. Three-dimensional movies make use of retinal disparity by using 3-D glasses that the viewer wears to create a different image on each eye. The perceptual system quickly, easily, and unconsciously turns the disparity into 3-D.

An important binocular depth cue is convergence, the inward turning of our eyes that is required to focus on objects that are less than about 50 feet away from us. The visual cortex uses the size of the convergence angle between the eyes to judge the object’s distance. You will be able to feel your eyes converging if you slowly bring a finger closer to your nose while continuing to focus on it. When you close one eye, you no longer feel the tension—convergence is a binocular depth cue that requires both eyes to work.
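The geometry behind convergence can be made concrete. The sketch below assumes both eyes fixate a point straight ahead and uses a typical adult interocular distance of about 6.3 cm, a value chosen for illustration rather than taken from the text. It suggests why the cue fades with distance: the convergence angle shrinks rapidly, so beyond roughly 15 m (about 50 feet) further changes in distance barely change the angle.

```python
import math

EYE_SEPARATION_M = 0.063  # assumed typical adult interocular distance


def convergence_angle_deg(distance_m, eye_sep=EYE_SEPARATION_M):
    """Angle between the two lines of sight when both eyes fixate a point straight ahead."""
    return math.degrees(2 * math.atan((eye_sep / 2) / distance_m))


def distance_from_convergence(angle_deg, eye_sep=EYE_SEPARATION_M):
    """Invert the geometry: recover the fixation distance from the convergence angle."""
    return (eye_sep / 2) / math.tan(math.radians(angle_deg) / 2)


for d in (0.1, 0.5, 2.0, 15.0):
    print(f"{d:5.1f} m -> {convergence_angle_deg(d):6.2f} degrees")
```

The inverse function mirrors the text's claim that the visual cortex can use the size of the angle to judge distance; the real computation in the brain is, of course, not known to take this form.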

The visual system also uses accommodation to help determine depth. As the lens changes its curvature to focus on distant or close objects, information relayed from the muscles attached to the lens helps us determine an object’s distance. Accommodation is only effective at short viewing distances, however, so while it comes in handy when threading a needle or tying shoelaces, it is far less effective when driving or playing sports.
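Why accommodation works only at short distances can be seen from a standard optics convention that the text does not use: focusing demand measured in diopters, the reciprocal of the viewing distance in meters. The sketch assumes a relaxed eye focused at optical infinity, so the demand for an object at distance d is 1/d; the example distances are hypothetical.

```python
# Assumptions (not from the text): a relaxed eye is focused at optical infinity,
# and focusing demand is measured in diopters (1 / distance in meters).


def accommodation_demand(distance_m):
    """Extra lens power, in diopters, needed to focus an object at distance_m."""
    return 1.0 / distance_m


def demand_change(near_m, far_m):
    """How much the lens must change when shifting focus between two distances."""
    return accommodation_demand(near_m) - accommodation_demand(far_m)


print(demand_change(0.25, 0.5))   # needle-threading range: a large change
print(demand_change(10.0, 20.0))  # driving range: almost no change
```

Shifting focus between 25 cm and 50 cm requires a change of 2 diopters, whereas shifting between 10 m and 20 m requires only 0.05 diopters, so the muscle signals that carry this cue become nearly uninformative at a distance.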

Although the best cues to depth occur when both eyes work together, we are able to see depth even with one eye closed. Monocular depth cues are depth cues that help us perceive depth using only one eye (Sekuler & Blake, 2006). [13] Some of the most important are summarized in Table 4.2 "Monocular Depth Cues That Help Us Judge Depth at a Distance".

Table 4.2 Monocular Depth Cues That Help Us Judge Depth at a Distance

| Name | Description | Example | Image |
|---|---|---|---|
| Position | We tend to see objects higher up in our field of vision as farther away. | The fence posts at right appear farther away not only because they become smaller but also because they appear higher up in the picture. | |
| Relative size | Assuming that the objects in a scene are the same size, smaller objects are perceived as farther away. | At right, the cars in the distance appear smaller than those nearer to us. | |
| Linear perspective | Parallel lines appear to converge at a distance. | We know that the tracks at right are parallel. When they appear closer together, we determine they are farther away. | |
| Light and shadow | The eye receives more reflected light from objects that are closer to us. Normally, light comes from above, so darker images are in shadow. | We see the images at right as extending and indented according to their shadowing. If we invert the picture, the images will reverse. | Figure 4.2 |
| Interposition | When one object overlaps another object, we view it as closer. | At right, because the blue star covers the pink bar, it is seen as closer than the yellow moon. | |
| Aerial perspective | Objects that appear hazy, or that are covered with smog or dust, appear farther away. | The artist who painted the picture on the right used aerial perspective to make the clouds more hazy and thus appear farther away. | |
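The relative-size cue in Table 4.2 can be expressed with the geometry of visual angle: if an object's true size is assumed, the angle it subtends at the eye implies a distance. The sketch below is illustrative only; the 4.5 m car length is a hypothetical value, and the assumption of a known size is exactly what the cue takes for granted.

```python
import math

# Assumption (not from the text): the observer "knows" the true size of the object,
# which is what the relative-size cue takes for granted.


def visual_angle_deg(size_m, distance_m):
    """Angle an object of a given size subtends at the eye."""
    return math.degrees(2 * math.atan(size_m / (2 * distance_m)))


def distance_from_size(size_m, angle_deg):
    """Distance implied by an assumed true size and the observed visual angle."""
    return size_m / (2 * math.tan(math.radians(angle_deg) / 2))


CAR_LENGTH_M = 4.5  # hypothetical typical car length
for d in (10, 20, 40):
    angle = visual_angle_deg(CAR_LENGTH_M, d)
    print(f"car at {d} m subtends {angle:.2f} degrees")
```

Doubling the distance roughly halves the visual angle, which is why same-sized cars farther down the road look smaller.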

Perceiving Motion

Many animals, including human beings, have very sophisticated perceptual skills that allow them to coordinate their own motion with the motion of moving objects in order to intercept those objects. Bats and birds use this mechanism to catch up with prey, dogs use it to catch a Frisbee, and humans use it to catch a moving football. The brain detects motion partly from the changing size of an image on the retina (objects that look bigger are usually closer to us) and partly from the relative brightness of objects.
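The first cue, the growing size of an image on the retina, is enough on its own to estimate when an approaching object will arrive. A quantity often called tau in the perception literature divides the object's current visual angle by how fast that angle is growing. The sketch assumes an object approaching head-on at constant speed; the ball size, distance, and speed are hypothetical values, and the function names are invented for this example.

```python
import math


def visual_angle_rad(size_m, distance_m):
    """Angle (radians) that an object of size_m subtends at distance_m."""
    return 2 * math.atan(size_m / (2 * distance_m))


def time_to_contact(size_m, distance_m, speed_m_s, dt=1e-3):
    """Estimate time to contact from image expansion alone: tau = theta / (d theta / dt)."""
    theta_now = visual_angle_rad(size_m, distance_m)
    theta_next = visual_angle_rad(size_m, distance_m - speed_m_s * dt)
    expansion_rate = (theta_next - theta_now) / dt  # how fast the retinal image grows
    return theta_now / expansion_rate


# Hypothetical football-sized object 10 m away, closing at 5 m/s (true answer: 2 s).
print(round(time_to_contact(0.22, 10.0, 5.0), 2))
```

Notice that the estimate never uses the object's actual distance or speed directly; it relies only on how the image changes, which is the point the paragraph makes about the changing size of retinal images.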

We also experience motion when objects near each other change their appearance. The beta effect refers to the perception of motion that occurs when different images are presented next to each other in succession (see Note 4.43 "Beta Effect and Phi Phenomenon"). The visual cortex fills in the missing part of the motion and we see the object moving. The beta effect is used in movies to create the experience of motion. A related effect is the phi phenomenon, in which we perceive a sensation of motion caused by the appearance and disappearance of objects that are near each other. The phi phenomenon looks like a moving zone or cloud of background color surrounding the flashing objects. The beta effect and the phi phenomenon are further examples of the importance of the gestalt: our tendency to “see more than the sum of the parts.”

Beta Effect and Phi Phenomenon

In the beta effect, our eyes detect motion from a series of still images, each with the object in a different place. This is the fundamental mechanism of motion pictures (movies). In the phi phenomenon, the perception of motion is based on the momentary hiding of an image.

Phi phenomenon: http://upload.wikimedia.org/wikipedia/commons/6/6e/Lilac-Chaser.gif

Beta effect: http://upload.wikimedia.org/wikipedia/commons/0/09/Phi_phenomenom_no_watermark.gif
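The beta effect can be illustrated with a handful of text "frames." Each frame below is a still image with a single dot in a new position; nothing in any individual frame moves, yet when the frames are redrawn in quick succession in a terminal, the dot tends to be seen as traveling. The frame width, spacing, and delay are arbitrary choices for illustration, and `beta_frames` and `play` are hypothetical names.

```python
import time


def beta_frames(width=10, steps=4, spacing=3):
    """Build still 'images' (text rows), each with one dot at a new position."""
    if (steps - 1) * spacing >= width:
        raise ValueError("dot would run off the edge of the frame")
    frames = []
    for step in range(steps):
        row = ["."] * width
        row[step * spacing] = "O"
        frames.append("".join(row))
    return frames


def play(frames, delay_s=0.15, repeats=3):
    """Redraw frames in place on one terminal line; at short delays the dot seems to move."""
    for _ in range(repeats):
        for frame in frames:
            print("\r" + frame, end="", flush=True)
            time.sleep(delay_s)
    print()


play(beta_frames())
```

Lengthening `delay_s` makes the illusion break down into a series of separate dots, which is a simple way to see that the motion is supplied by the perceiver rather than by the frames.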

KEY TAKEAWAYS

· Vision is the process of detecting the electromagnetic energy that surrounds us. Only a small fraction of the electromagnetic spectrum is visible to humans.

· The visual receptor cells on the retina detect shape, color, motion, and depth.

· Light enters the eye through the transparent cornea and passes through the pupil at the center of the iris. The lens adjusts to focus the light on the retina, where it appears upside down and backward. Receptor cells on the retina are excited or inhibited by the light and send information to the visual cortex through the optic nerve.

· The retina has two types of photoreceptor cells: rods, which detect brightness and respond to black and white, and cones, which respond to red, green, and blue. Color blindness occurs when people lack function in the red- or green-sensitive cones.

· Feature detector neurons in the visual cortex help us recognize objects, and some neurons respond selectively to faces and other body parts.

· The Young-Helmholtz trichromatic color theory proposes that color perception is the result of the signals sent by the three types of cones, whereas the opponent-process color theory proposes that we perceive color as three sets of opponent colors: red-green, yellow-blue, and white-black.

· Depth perception results from the use of binocular and monocular depth cues.

· Motion is perceived as a function of the size and brightness of objects. The beta effect and the phi phenomenon are examples of perceived motion.

EXERCISES AND CRITICAL THINKING

1. Consider some ways that the processes of visual perception help you engage in an everyday activity, such as driving a car or riding a bicycle.

2. Imagine for a moment what your life would be like if you couldn’t see. Do you think you would be able to compensate for your loss of sight by using other senses?

[1] Livingstone, M. S. (2000). Is it warm? Is it real? Or just low spatial frequency? Science, 290, 1299.

[2] Kelsey, C. A. (1997). Detection of visual information. In W. R. Hendee & P. N. T. Wells (Eds.), The perception of visual information (2nd ed.). New York, NY: Springer-Verlag; Livingstone, M., & Hubel, D. (1998). Segregation of form, color, movement, and depth: Anatomy, physiology, and perception. Science, 240, 740–749.

[3] Rodriguez, E., George, N., Lachaux, J.-P., Martinerie, J., Renault, B., & Varela, F. J. (1999). Perception’s shadow: Long-distance synchronization of human brain activity. Nature, 397(6718), 430–433.

[4] Downing, P. E., Jiang, Y., Shuman, M., & Kanwisher, N. (2001). A cortical area selective for visual processing of the human body. Science, 293(5539), 2470–2473; Haxby, J. V., Gobbini, M. I., Furey, M. L., Ishai, A., Schouten, J. L., & Pietrini, P. (2001). Distributed and overlapping representations of faces and objects in ventral temporal cortex. Science, 293(5539), 2425–2430.

[5] McKone, E., Kanwisher, N., & Duchaine, B. C. (2007). Can generic expertise explain special processing for faces? Trends in Cognitive Sciences, 11, 8–15; Pitcher, D., Walsh, V., Yovel, G., & Duchaine, B. (2007). TMS evidence for the involvement of the right occipital face area in early face processing. Current Biology, 17, 1568–1573.

[6] Geldard, F. A. (1972). The human senses (2nd ed.). New York, NY: John Wiley & Sons.

[7] Gegenfurtner, K. R., & Kiper, D. C. (2003). Color vision. Annual Review of Neuroscience, 26, 181–206.

[8] Howard, I. P., & Rogers, B. J. (2001). Seeing in depth: Basic mechanisms (Vol. 1). Toronto, Ontario, Canada: Porteous.

[9] Witherington, D. C. (2005). The development of prospective grasping control between 5 and 7 months: A longitudinal study. Infancy, 7(2), 143–161.

[10] Gibson, E. J., & Walk, R. D. (1960). The “visual cliff.” Scientific American, 202(4), 64–71.

[11] Campos, J. J., Langer, A., & Krowitz, A. (1970). Cardiac responses on the visual cliff in prelocomotor human infants. Science, 170(3954), 196–197.

[12] Adolph, K. E. (2000). Specificity of learning: Why infants fall over a veritable cliff. Psychological Science, 11(4), 290–295.

[13] Sekuler, R., & Blake, R. (2006). Perception (5th ed.). New York, NY: McGraw-Hill.

4.3 Hearing

LEARNING OBJECTIVES

1. Draw a picture of the ear and label its key structures and functions, and describe the role they play in hearing.

2. Describe the process of transduction in hearing.

Like vision and all the other senses, hearing begins with transduction. Sound waves that are collected by our ears are converted into neural impulses, which are sent to the brain where they are integrated with past experience and interpreted as the sounds we experience. The human ear is sensitive to a wide range of sounds, from the faint tick of a clock in a nearby room to the roar of a rock band at a nightclub, and we have the ability to detect very small variations in sound. But the ear is particularly sensitive to sounds in the frequency range of the human voice. A mother can pick out her child’s voice from a host of others, and when we pick up the phone we quickly recognize a familiar voice. In a fraction of a second, our auditory system receives the sound waves, transmits them to the auditory cortex, compares them to stored knowledge of other voices, and identifies the caller.

The Ear

Just as the eye detects light waves, the ear detects sound waves. Vibrating objects (such as the human vocal cords or guitar strings) cause air molecules to bump into each other and produce sound waves, which travel from their source as peaks and valleys much like the ripples that expand outward when a stone is tossed into a pond. Unlike light waves, which can travel in a vacuum, sound waves are carried within mediums such as air, water, or metal, and it is the changes in pressure associated with these mediums that the ear detects.

As with light waves, we detect both the wavelength and the amplitude of sound waves. The frequency of a sound wave, which is inversely related to its wavelength, is measured as the number of waves that arrive per second (in hertz) and determines our perception of pitch, the perceived frequency of a sound. Longer sound waves have lower frequency and produce a lower pitch, whereas shorter waves have higher frequency and a higher pitch.
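The inverse relationship between wavelength and frequency follows from wavelength = speed ÷ frequency. The sketch below assumes a speed of sound in air of 343 m/s (roughly air at 20 °C), a value not given in the text, and shows how long the waves are at the limits of human hearing.

```python
SPEED_OF_SOUND_M_S = 343.0  # assumed: air at about 20 °C


def wavelength_m(frequency_hz, speed_m_s=SPEED_OF_SOUND_M_S):
    """Wavelength = speed / frequency, so higher frequencies have shorter waves."""
    return speed_m_s / frequency_hz


for f in (20, 440, 20_000):  # limits of human hearing, plus the A above middle C
    print(f"{f:>6} Hz -> {wavelength_m(f):.3f} m")
```

A 20-hertz tone has a wavelength of about 17 m, while a 20,000-hertz tone has a wavelength under 2 cm, which makes concrete the statement that longer waves correspond to lower pitch.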

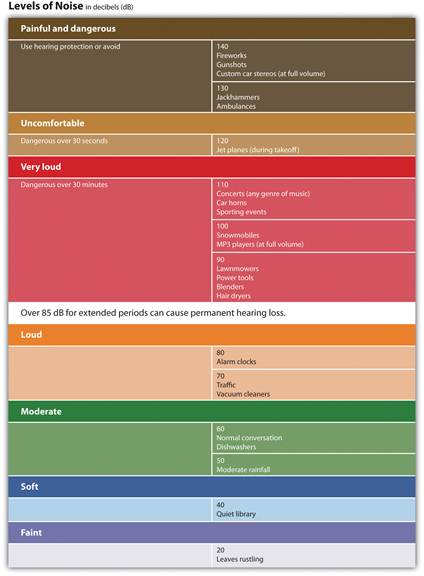

The amplitude, or height of the sound wave, determines how much energy it contains and is perceived as loudness (the degree of sound volume). Larger waves are perceived as louder. Sound level is measured in decibels, a logarithmic unit of relative sound intensity. Zero decibels represents the absolute threshold for human hearing, below which we cannot hear a sound. Each increase of 10 decibels represents a tenfold increase in the intensity of the sound (see Figure 4.29 "Sounds in Everyday Life"). The sound of a typical conversation (about 60 decibels) is therefore 1,000 times more intense than the sound of a faint whisper (30 decibels), whereas the sound of a jackhammer (130 decibels) is 10 billion times more intense than the whisper.

Figure 4.29 Sounds in Everyday Life

The human ear can comfortably hear sounds up to 80 decibels. Prolonged exposure to sounds above 80 decibels can cause hearing loss.

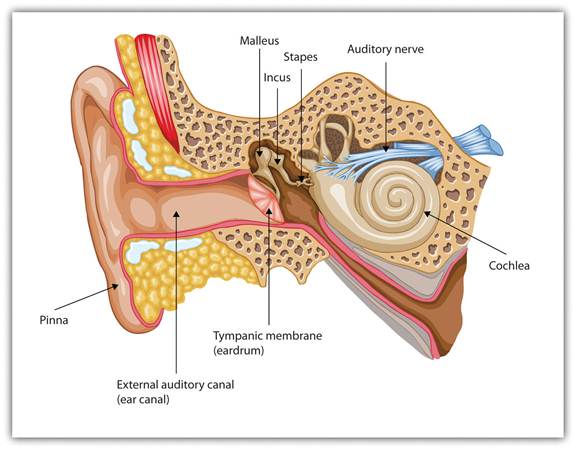

Audition begins in the pinna, the external and visible part of the ear, which is shaped like a funnel to draw in sound waves and guide them into the auditory canal. At the end of the canal, the sound waves strike the tightly stretched, highly sensitive membrane known as the tympanic membrane (or eardrum), which vibrates with the waves. The resulting vibrations are relayed into the middle ear through three tiny bones, known as the ossicles (the hammer, or malleus; the anvil, or incus; and the stirrup, or stapes), to the cochlea, a snail-shaped liquid-filled tube in the inner ear. The vibrations cause the oval window, the membrane covering the opening of the cochlea, to vibrate, disturbing the fluid inside the cochlea.

The movements of the fluid in the cochlea bend the hair cells of the inner ear, much in the same way that a gust of wind bends over wheat stalks in a field. The movements of the hair cells trigger nerve impulses in the attached neurons, which are sent to the auditory nerve and then to the auditory cortex in the brain. The cochlea contains about 16,000 hair cells, each of which holds a bundle of fibers known as cilia on its tip. The cilia are so sensitive that they can detect a movement that pushes them the width of a single atom. To put things in perspective, cilia swaying at the width of an atom is equivalent to the tip of the Eiffel Tower swaying by half an inch (Corey et al., 2004). [1]

Figure 4.30 The Human Ear

Sound waves enter the outer ear and are transmitted through the auditory canal to the eardrum. The resulting vibrations are moved by the three small ossicles into the cochlea, where they are detected by hair cells and sent to the auditory nerve.

Although loudness is directly determined by the number of hair cells that are vibrating, two different mechanisms are used to detect pitch. The frequency theory of hearing proposes that whatever the pitch of a sound wave, nerve impulses of a corresponding frequency will be sent to the auditory nerve. For example, a tone measuring 600 hertz will be transduced into 600 nerve impulses a second. This theory has a problem with high-pitched sounds, however, because the neurons cannot fire fast enough. To reach the necessary speed, the neurons work together in a sort of volley system in which different neurons fire in sequence, allowing us to detect sounds up to about 4,000 hertz.
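The volley idea can be sketched as a scheduling problem: if one neuron cannot keep up with every wave peak, peaks are handed out to a team of neurons in rotation. The ceiling of about 1,000 impulses per second for a single neuron is an assumption made for illustration (roughly what a 1-millisecond refractory period allows), not a figure from the text, and the function names are hypothetical.

```python
import math

MAX_RATE_HZ = 1000  # assumed ceiling for one neuron (about a 1 ms refractory period)


def neurons_needed(tone_hz, max_rate_hz=MAX_RATE_HZ):
    """Smallest team that can mark every cycle without any member exceeding max_rate_hz."""
    return math.ceil(tone_hz / max_rate_hz)


def volley_schedule(tone_hz, n_cycles, max_rate_hz=MAX_RATE_HZ):
    """List (time_s, neuron_id) pairs: successive wave peaks go to neurons in rotation."""
    team = neurons_needed(tone_hz, max_rate_hz)
    period_s = 1.0 / tone_hz
    return [(cycle * period_s, cycle % team) for cycle in range(n_cycles)]


print(neurons_needed(600))   # the text's 600-hertz tone: a single neuron keeps up
print(neurons_needed(4000))  # about the upper limit the text gives for volleying
print([neuron for _, neuron in volley_schedule(4000, 8)])
```

Each neuron in the team fires on every fourth peak of a 4,000-hertz tone, so no single cell exceeds the assumed limit, while the team as a whole still marks every cycle.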

Not only is frequency important, but location is critical as well. The cochlea relays information about the specific area, or place, in the cochlea that is most activated by the incoming sound. The place theory of hearing proposes that different areas of the cochlea respond to different frequencies. Higher tones excite areas closest to the opening of the cochlea (near the oval window). Lower tones excite areas near the narrow tip of the cochlea, at the opposite end. Pitch is therefore determined in part by the area of the cochlea firing the most frequently.
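Place theory has a well-known quantitative form that is not part of this text: Greenwood's place-frequency function, which estimates the frequency that best excites each point along the human cochlea. The constants below are the commonly cited human values; treat the sketch as an approximation rather than a precise map of any individual ear.

```python
def greenwood_frequency_hz(position_from_apex):
    """Approximate best frequency at a point along the human cochlea.

    position_from_apex runs from 0.0 at the narrow tip (apex) to 1.0 at the
    base, near the oval window."""
    if not 0.0 <= position_from_apex <= 1.0:
        raise ValueError("position must be between 0 and 1")
    A, a, k = 165.4, 2.1, 0.88  # commonly cited constants for the human cochlea
    return A * (10 ** (a * position_from_apex) - k)


for x in (0.0, 0.25, 0.5, 0.75, 1.0):
    print(f"{x:.2f} of the way from the apex -> {greenwood_frequency_hz(x):,.0f} Hz")
```

Low frequencies map to the apex and high frequencies to the base, matching the description above, and the endpoints land near the 20 to 20,000 hertz range of human hearing.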

Just as having two eyes in slightly different positions allows us to perceive depth, so the fact that the ears are placed on either side of the head enables us to benefit from stereophonic, or three-dimensional, hearing. If a sound occurs on your left side, the left ear will receive the sound slightly sooner than the right ear, and the sound it receives will be more intense, allowing you to quickly determine the location of the sound. Although the distance between our two ears is only about 6 inches, and sound waves travel at 750 miles an hour, the time and intensity differences are easily detected (Middlebrooks & Green, 1991). [2] When a sound is equidistant from both ears, such as when it is directly in front, behind, beneath or overhead, we have more difficulty pinpointing its location. It is for this reason that dogs (and people, too) tend to cock their heads when trying to pinpoint a sound, so that the ears receive slightly different signals.
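The timing difference between the two ears can be estimated with simple geometry. The sketch uses the text's ear separation of about 6 inches (0.1524 m) and an assumed speed of sound of 343 m/s (air at about 20 °C, roughly 767 miles an hour). It treats the extra path to the far ear as a straight line and ignores the head itself, so real timing differences are somewhat larger.

```python
import math

EAR_SEPARATION_M = 0.1524   # "about 6 inches" from the text
SPEED_OF_SOUND_M_S = 343.0  # assumed: air at about 20 °C


def interaural_time_difference_s(azimuth_deg, ear_sep_m=EAR_SEPARATION_M, c=SPEED_OF_SOUND_M_S):
    """Extra travel time to the far ear for a distant source.

    azimuth_deg is 0 straight ahead, 90 directly to one side, 180 straight behind.
    A straight-line path is assumed; the head is ignored."""
    return ear_sep_m * math.sin(math.radians(azimuth_deg)) / c


for angle in (0, 30, 90, 150, 180):
    print(f"{angle:>3} degrees -> {interaural_time_difference_s(angle) * 1e6:6.1f} microseconds")
```

The largest difference, for a sound directly to one side, is under half a millisecond. Sources straight ahead and straight behind both give zero, and 30 degrees and 150 degrees give the same value, which illustrates why sounds equidistant from both ears are hard to pinpoint and why tilting the head helps.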

Hearing Loss

More than 31 million Americans suffer from some kind of hearing impairment (Kochkin, 2005). [3] Conductive hearing loss is caused by physical damage to the ear (such as to the eardrums or ossicles) that reduces the ability of the ear to transfer vibrations from the outer ear to the inner ear. Sensorineural hearing loss, which is caused by damage to the cilia or to the auditory nerve, is less common overall but frequently occurs with age (Tennesen, 2007). [4] The cilia are extremely fragile, and by the time we are 65 years old, we will have lost 40% of them, particularly those that respond to high-pitched sounds (Chisolm, Willott, & Lister, 2003). [5]

Prolonged exposure to loud sounds will eventually create sensorineural hearing loss as the cilia are damaged by the noise. People who constantly operate noisy machinery without using appropriate ear protection are at high risk of hearing loss, as are people who listen to loud music on their headphones or who engage in noisy hobbies, such as hunting or motorcycling. Sounds that are 85 decibels or more can cause damage to your hearing, particularly if you are exposed to them repeatedly. Sounds of more than 130 decibels are dangerous even if you are exposed to them infrequently. People who experience tinnitus (a ringing or a buzzing sensation) after being exposed to loud sounds have very likely experienced some damage to their cilia. Taking precautions when being exposed to loud sound is important, as cilia do not grow back.
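The 85-decibel figure can be turned into a rough daily exposure budget. One widely used occupational guideline, the NIOSH recommended exposure limit (not part of this text), allows 8 hours at 85 A-weighted decibels (dBA) and halves the allowed time for every additional 3 decibels. The sketch implements that rule as an illustration; the parameter names are hypothetical.

```python
def permissible_hours(level_dba, criterion_db=85.0, exchange_db=3.0, criterion_hours=8.0):
    """Daily exposure allowed under an 85 dBA, 3-dB-exchange-rate rule (NIOSH-style REL)."""
    return criterion_hours / 2 ** ((level_dba - criterion_db) / exchange_db)


for level in (85, 88, 94, 100, 130):
    minutes = permissible_hours(level) * 60
    print(f"{level} dBA -> about {minutes:,.1f} minutes per day")
```

Under this rule, 100 dBA is allowed for only 15 minutes a day, and 130 dBA for less than a second, which is consistent with the text's warning that sounds above 130 decibels are dangerous even with infrequent exposure.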

While conductive hearing loss can often be improved through hearing aids that amplify the sound, hearing aids are of little help for sensorineural hearing loss. But if the auditory nerve is still intact, a cochlear implant may be used. A cochlear implant is a device made up of a series of electrodes that are placed inside the cochlea. The device bypasses the hair cells by stimulating the auditory nerve cells directly. The latest implants make use of place theory, enabling different spots on the implant to respond to different pitches. The cochlear implant can help children who would otherwise be deaf to hear, and if the device is implanted early enough, these children can frequently learn to speak, often as well as children with typical hearing do (Dettman, Pinder, Briggs, Dowell, & Leigh, 2007; Dorman & Wilson, 2004). [6]
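Place coding in an implant amounts to splitting the incoming sound into frequency bands and routing each band to one electrode, with low bands going to electrodes deeper toward the apex. Everything specific in this sketch is hypothetical: the electrode count, the 200 to 8,000 hertz range, and the choice of logarithmic spacing are illustrative assumptions, not the design of any particular device.

```python
def electrode_bands(n_electrodes=12, low_hz=200.0, high_hz=8000.0):
    """Split low_hz..high_hz into log-spaced bands; index 0 is the most apical electrode."""
    step = (high_hz / low_hz) ** (1 / n_electrodes)
    return [(low_hz * step ** i, low_hz * step ** (i + 1)) for i in range(n_electrodes)]


def electrode_for(frequency_hz, bands):
    """Return the index of the electrode whose band contains frequency_hz, or None."""
    for index, (band_low, band_high) in enumerate(bands):
        if band_low <= frequency_hz < band_high:
            return index
    return None


bands = electrode_bands()
for f in (250, 1000, 4000, 7000):
    print(f"{f} Hz -> electrode {electrode_for(f, bands)}")
```

Logarithmic spacing is used here because the cochlea's own place map is roughly logarithmic in frequency; a real device's band layout may differ.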

KEY TAKEAWAYS

· Sound waves vibrating through mediums such as air, water, or metal are the stimulus energy that is sensed by the ear.

· The hearing system is designed to assess frequency (pitch) and amplitude (loudness).

· Sound waves enter the outer ear (the pinna) and are sent to the eardrum via the auditory canal. The resulting vibrations are relayed by the three ossicles, causing the oval window covering the cochlea to vibrate. The vibrations are detected by the cilia (hair cells) and sent via the auditory nerve to the auditory cortex.

· There are two theories as to how we perceive pitch: The frequency theory of hearing suggests that as a sound wave’s pitch changes, nerve impulses of a corresponding frequency enter the auditory nerve. The place theory of hearing suggests that we hear different pitches because different areas of the cochlea respond to higher and lower pitches.

· Conductive hearing loss is caused by physical damage to the ear or eardrum and may be improved by hearing aids. Sensorineural hearing loss, caused by damage to the hair cells or auditory nerves in the inner ear, may be produced by prolonged exposure to sounds of more than 85 decibels; when the auditory nerve is intact, it may be helped by a cochlear implant.

EXERCISE AND CRITICAL THINKING

1. Given what you have learned about hearing in this chapter, are you engaging in any activities that might cause long-term hearing loss? If so, how might you change your behavior to reduce the likelihood of suffering damage?

[1] Corey, D. P., García-Añoveros, J., Holt, J. R., Kwan, K. Y., Lin, S.-Y., Vollrath, M. A., Amalfitano, A.,…Zhang, D.-S. (2004). TRPA1 is a candidate for the mechano-sensitive transduction channel of vertebrate hair cells. Nature, 432, 723–730. Retrieved from http://www.nature.com/nature/journal/v432/n7018/full/nature03066.html

[2] Middlebrooks, J. C., & Green, D. M. (1991). Sound localization by human listeners. Annual Review of Psychology, 42, 135–159.

[3] Kochkin, S. (2005). MarkeTrak VII: Hearing loss population tops 31 million people. Hearing Review, 12(7), 16–29.

[4] Tennesen, M. (2007, March 10). Gone today, hear tomorrow. New Scientist, 2594, 42–45.

[5] Chisolm, T. H., Willott, J. F., & Lister, J. J. (2003). The aging auditory system: Anatomic and physiologic changes and implications for rehabilitation. International Journal of Audiology, 42(Suppl. 2), 2S3–2S10.

[6] Dettman, S. J., Pinder, D., Briggs, R. J. S., Dowell, R. C., & Leigh, J. R. (2007). Communication development in children who receive the cochlear implant younger than 12 months: Risk versus benefits. Ear and Hearing, 28(2, Suppl.), 11S–18S; Dorman, M. F., & Wilson, B. S. (2004). The design and function of cochlear implants. American Scientist, 92, 436–445.

4.4 Tasting, Smelling, and Touching

LEARNING OBJECTIVES

1. Summarize how the senses of taste and olfaction transduce stimuli into perceptions.

2. Describe the process of transduction in the senses of touch and proprioception.

3. Outline the gate control theory of pain. Explain why pain matters and how it may be controlled.

Although vision and hearing are by far the most important, human sensation is rounded out by four other senses, each of which provides an essential avenue to a better understanding of and response to the world around us. These other senses are touch, taste, smell
