
Concepts and Terminology

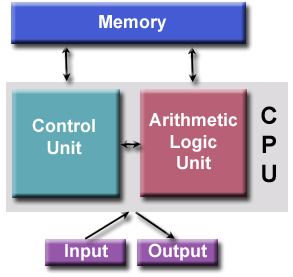

von Neumann Architecture

* Named after the Hungarian mathematician John von Neumann, who first set out the general requirements for an electronic computer in his 1945 papers.

* Since then, virtually all computers have followed this basic design, which differs from earlier computers that were programmed through "hard wiring".

The design comprises four main components:

1. Memory

2. Control Unit

3. Arithmetic Logic Unit

4. Input/Output

* Read/write, random access memory is used to store both program instructions and data

o Program instructions are coded data which tell the computer to do something

o Data is simply information to be used by the program

* Control unit fetches instructions/data from memory, decodes the instructions and then sequentially coordinates operations to accomplish the programmed task.

* Arithmetic Logic Unit performs basic arithmetic and logical operations

* Input/Output is the interface to the human operator
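The stored-program idea described above can be illustrated with a toy machine. This is a minimal sketch, not a model of any real instruction set: the opcodes `LOAD`, `ADD`, `STORE` and `HALT`, the function name `run`, and the memory layout are all assumptions made for the example. The point it shows is that instructions and data sit in the same read/write memory, while the control loop fetches, decodes and executes one instruction at a time.

```python
def run(memory):
    """Run a toy stored-program machine until it reaches HALT."""
    pc = 0   # program counter, maintained by the control unit
    acc = 0  # accumulator, the working register of the ALU
    while True:
        opcode, operand = memory[pc]      # fetch the next instruction
        pc += 1
        if opcode == "LOAD":              # decode, then execute
            acc = memory[operand]
        elif opcode == "ADD":
            acc = acc + memory[operand]   # arithmetic done by the ALU
        elif opcode == "STORE":
            memory[operand] = acc
        elif opcode == "HALT":
            return memory
        else:
            raise ValueError(f"unknown opcode: {opcode}")


# Cells 0-3 hold instructions; cells 4-6 hold data in the same memory.
program = [
    ("LOAD", 4),     # acc = memory[4]
    ("ADD", 5),      # acc = acc + memory[5]
    ("STORE", 6),    # memory[6] = acc
    ("HALT", None),
    2,               # cell 4: first operand
    3,               # cell 5: second operand
    0,               # cell 6: result is written here
]

result = run(list(program))
print(result[6])  # 5
```

Printing the result cell stands in for the Input/Output component.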

Flynn's Classical Taxonomy

There are different ways to classify parallel computers. One of the more widely used classifications, in use since 1966, is called Flynn's Taxonomy.

Flynn's taxonomy distinguishes multi-processor computer architectures according to how they can be classified along the two independent dimensions of Instruction and Data. Each of these dimensions can have only one of two possible states: Single or Multiple.

There are 4 possible classifications according to Flynn:

SISD

SIMD

MISD

MIMD
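Because each of the two dimensions has only two states, the classification reduces to a pair of yes/no questions. The sketch below is one way to express that; the function name `flynn_class` and the choice to describe a machine by its number of instruction and data streams are assumptions made for illustration.

```python
def flynn_class(instruction_streams, data_streams):
    """Return the Flynn category for the given numbers of streams."""
    if instruction_streams < 1 or data_streams < 1:
        raise ValueError("a machine needs at least one stream of each kind")
    instruction = "S" if instruction_streams == 1 else "M"
    data = "S" if data_streams == 1 else "M"
    return f"{instruction}I{data}D"


print(flynn_class(1, 1))  # SISD
print(flynn_class(1, 8))  # SIMD
print(flynn_class(4, 1))  # MISD
print(flynn_class(4, 4))  # MIMD
```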

SISD

Single Instruction, Single Data (SISD):

* A serial (non-parallel) computer

* Single instruction: only one instruction stream is being acted on by the CPU during any one clock cycle

* Single data: only one data stream is being used as input during any one clock cycle

* Deterministic execution

* This is the oldest type of computer

* Examples: older generation mainframes, minicomputers and workstations; single-processor, single-core PCs.

SIMD

Single Instruction, Multiple Data (SIMD):

* A type of parallel computer

* Single instruction: All processing units execute the same instruction at any given clock cycle

* Multiple data: Each processing unit can operate on a different data element

* Best suited for specialized problems characterized by a high degree of regularity, such as graphics/image processing.

* Synchronous (lockstep) and deterministic execution

* Two varieties: Processor Arrays and Vector Pipelines

* Examples:

o Processor Arrays: Connection Machine CM-2, MasPar MP-1 & MP-2, ILLIAC IV

o Vector Pipelines: IBM 9000, Cray X-MP, Y-MP & C90, Fujitsu VP, NEC SX-2, Hitachi S820, ETA10

* Most modern computers, particularly those with graphics processing units (GPUs), employ SIMD instructions and execution units.
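The SIMD pattern can be modeled in a few lines. This is only a structural sketch: the helper `simd_execute` and the sample pixel data are hypothetical, and plain Python processes the lanes one after another rather than in hardware lockstep. What it does show is a single instruction applied to many data elements, with each lane receiving its own element.

```python
import operator


def simd_execute(instruction, *operand_lanes):
    """Apply one instruction to every lane; lane i gets element i of each operand."""
    return [instruction(*elements) for elements in zip(*operand_lanes)]


pixels = [10, 20, 30, 40]   # one data element per processing unit
gain = [2, 2, 2, 2]

# The same multiply instruction is issued to all four lanes.
brightened = simd_execute(operator.mul, pixels, gain)
print(brightened)  # [20, 40, 60, 80]
```

The regular, element-by-element structure of this kind of image operation is what makes it a good fit for SIMD hardware.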

MISD

Multiple Instruction, Single Data (MISD):

* A single data stream is fed into multiple processing units.

* Each processing unit operates on the data independently via independent instruction streams.

* Few actual examples of this class of parallel computer have ever existed. One is the experimental Carnegie-Mellon C.mmp computer (1971).

* Some conceivable uses might be:

o multiple frequency filters operating on a single signal stream

o multiple cryptography algorithms attempting to crack a single coded message.
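The first conceivable use above, several filters applied to one signal, can be sketched as follows. The two filters and the driver `misd` are hypothetical names chosen for the example, and the units run one after another here rather than concurrently. The sketch shows the defining shape of MISD: different instruction streams, one shared data stream.

```python
def low_pass(signal):
    """Two-point moving average: smooths rapid changes."""
    return [(signal[i - 1] + signal[i]) / 2 for i in range(1, len(signal))]


def high_pass(signal):
    """First difference: keeps only the rapid changes."""
    return [signal[i] - signal[i - 1] for i in range(1, len(signal))]


def misd(units, stream):
    """Feed the same data stream to every processing unit."""
    return [unit(stream) for unit in units]


signal = [0, 2, 4, 2, 0]
smooth, edges = misd([low_pass, high_pass], signal)
print(smooth)  # [1.0, 3.0, 3.0, 1.0]
print(edges)   # [2, 2, -2, -2]
```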

MIMD

Multiple Instruction, Multiple Data (MIMD):

* Currently, the most common type of parallel computer. Most modern computers fall into this category.

* Multiple Instruction: every processor may be executing a different instruction stream

* Multiple Data: every processor may be working with a different data stream

* Execution can be synchronous or asynchronous, deterministic or non-deterministic

* Examples: most current supercomputers, networked parallel computer clusters and "grids", multi-processor SMP computers, multi-core PCs.

* Note: many MIMD architectures also include SIMD execution sub-components
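A minimal sketch of the MIMD pattern, assuming Python threads as stand-ins for processors: each worker runs a different function (instruction stream) on its own input (data stream), and the workers are not synchronized with one another. The task functions and the helper `run_mimd` are hypothetical. In CPython, threads illustrate the structure rather than a real speedup, since the interpreter runs Python bytecode in one thread at a time.

```python
from concurrent.futures import ThreadPoolExecutor


def total(values):
    """First instruction stream: add up numbers."""
    return sum(values)


def longest(words):
    """Second instruction stream: find the longest word."""
    return max(words, key=len)


def run_mimd(tasks):
    """Run each (function, data) pair on its own worker thread."""
    with ThreadPoolExecutor(max_workers=len(tasks)) as pool:
        futures = [pool.submit(function, data) for function, data in tasks]
        # Results are collected in submission order, whatever order
        # the workers happen to finish in.
        return [future.result() for future in futures]


tasks = [
    (total, [1, 2, 3, 4]),
    (longest, ["SISD", "vector", "grid"]),
]
results = run_mimd(tasks)
print(results)  # [10, 'vector']
```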

Date: 2016-03-03
