Computer Science

| I | INTRODUCTION |

Computer Science is the study of the theory, experimentation, and engineering that form the basis for the design and use of computers, devices that automatically process information. Computer science traces its roots to work done by English mathematician Charles Babbage, who first proposed a programmable mechanical calculator in 1837. Until the advent of electronic digital computers in the 1940s, computer science was not generally distinguished as being separate from mathematics and engineering. Since then it has sprouted numerous branches of research that are unique to the discipline.

| II | MAJOR BRANCHES OF COMPUTER SCIENCE |

Computer science can be divided into four main fields: software development, computer architecture (hardware), human-computer interfacing (the design of the most efficient ways for humans to use computers), and artificial intelligence (the attempt to make computers behave intelligently). Software development is concerned with creating computer programs that perform efficiently. Computer architecture is concerned with developing optimal hardware for specific computational needs. The areas of artificial intelligence (AI) and human-computer interfacing often involve the development of both software and hardware to solve specific problems.

| A | Software Development |

In developing computer software, computer scientists and engineers study various areas and techniques of software design, such as the best types of programming languages and algorithms (see below) to use in specific programs, how to efficiently store and retrieve information, and the computational limits of certain software-computer combinations. Software designers must consider many factors when developing a program. Often, program performance in one area must be sacrificed for the sake of the general performance of the software. For instance, since computers have only a limited amount of memory, software designers must limit the number of features they include in a program so that it will not require more memory than the system it is designed for can supply.

Software engineering is an area of software development in which computer scientists and engineers study methods and tools that facilitate the efficient development of correct, reliable, and robust computer programs. Research in this branch of computer science considers all the phases of the software life cycle, which begins with a formal problem specification, and progresses to the design of a solution, its implementation as a program, testing of the program, and program maintenance. Software engineers develop software tools and collections of tools called programming environments to improve the development process. For example, tools can help to manage the many components of a large program that is being written by a team of programmers.

Algorithms and data structures are the building blocks of computer programs. An algorithm is a precise step-by-step procedure for solving a problem within a finite time and using a finite amount of memory. Common algorithms include searching a collection of data, sorting data, and numerical operations such as matrix multiplication. Data structures are patterns for organizing information, and often represent relationships between data values. Some common data structures are called lists, arrays, records, stacks, queues, and trees.
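The ideas in this paragraph can be made concrete with a minimal sketch. It is written in Python, a language the article does not mention and which is used here only for illustration. It shows one searching algorithm (binary search) applied to sorted data, plus a list used as a stack and a `deque` used as a queue. The function and variable names are illustrative assumptions.

```python
from collections import deque


def binary_search(sorted_items, target):
    """Return the index of target in sorted_items, or -1 if it is absent."""
    low, high = 0, len(sorted_items) - 1
    while low <= high:
        mid = (low + high) // 2
        if sorted_items[mid] == target:
            return mid
        if sorted_items[mid] < target:
            low = mid + 1   # target can only be in the upper half
        else:
            high = mid - 1  # target can only be in the lower half
    return -1


scores = sorted([42, 7, 19, 88, 3])   # sorting: [3, 7, 19, 42, 88]
position = binary_search(scores, 42)  # searching: index 3

stack = [1, 2, 3]          # a list used as a stack: last in, first out
top = stack.pop()          # removes 3

queue = deque([1, 2, 3])   # a deque used as a queue: first in, first out
front = queue.popleft()    # removes 1
```

Each pass of the loop halves the range still to be searched, so the procedure finishes in a finite number of steps, as the definition of an algorithm requires.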

Computer scientists continue to develop new algorithms and data structures to solve new problems and improve the efficiency of existing programs. One area of theoretical research is called algorithmic complexity. Computer scientists in this field seek to develop techniques for determining the inherent efficiency of algorithms with respect to one another. Another area of theoretical research called computability theory seeks to identify the inherent limits of computation.

Software engineers use programming languages to communicate algorithms to a computer. Natural languages such as English are ambiguous—meaning that their grammatical structure and vocabulary can be interpreted in multiple ways—so they are not suited for programming. Instead, simple and unambiguous artificial languages are used. Computer scientists study ways of making programming languages more expressive, thereby simplifying programming and reducing errors. A program written in a programming language must be translated into machine language (the actual instructions that the computer follows). Computer scientists also develop better translation algorithms that produce more efficient machine language programs.

Databases and information retrieval are related fields of research. A database is an organized collection of information stored in a computer, such as a company’s customer account data. Computer scientists attempt to make it easier for users to access databases, prevent access by unauthorized users, and improve access speed. They are also interested in developing techniques to compress the data, so that more can be stored in the same amount of memory. Databases are sometimes distributed over multiple computers that update the data simultaneously, which can lead to inconsistency in the stored information. To address this problem, computer scientists also study ways of preventing inconsistency without reducing access speed.

Information retrieval is concerned with locating data in collections that are not clearly organized, such as a file of newspaper articles. Computer scientists develop algorithms for creating indexes of the data. Once the information is indexed, techniques developed for databases can be used to organize it. Data mining is a closely related field in which a large body of information is analyzed to identify patterns. For example, mining the sales records from a grocery store could identify shopping patterns to help guide the store in stocking its shelves more effectively.
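One common way to index a loosely organized collection is an inverted index, which maps each word to the documents containing it. The Python sketch below is a simplified illustration under that assumption, not a description of any particular retrieval system. The sample articles and the names `build_index` and `search` are hypothetical.

```python
from collections import defaultdict


def build_index(articles):
    """Map each lowercase word to the set of article ids that contain it."""
    index = defaultdict(set)
    for article_id, text in articles.items():
        for word in text.lower().split():
            index[word.strip(".,;:!?")].add(article_id)
    return index


def search(index, *words):
    """Return the ids of articles that contain every one of the given words."""
    matches = [index.get(word.lower(), set()) for word in words]
    return set.intersection(*matches) if matches else set()


# Hypothetical newspaper headlines, keyed by article id.
articles = {
    1: "Council approves new library budget.",
    2: "Library opens new reading room.",
    3: "Budget talks stall in council.",
}
index = build_index(articles)
```

With this index, `search(index, "council", "budget")` returns the ids of articles 1 and 3 without rereading the article text.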

Operating systems are programs that control the overall functioning of a computer. They provide the user interface, place programs into the computer’s memory and cause it to execute them, control the computer’s input and output devices, manage the computer’s resources such as its disk space, protect the computer from unauthorized use, and keep stored data secure. Computer scientists are interested in making operating systems easier to use, more secure, and more efficient by developing new user interface designs, designing new mechanisms that allow data to be shared while preventing access to sensitive data, and developing algorithms that make more effective use of the computer’s time and memory.

The study of numerical computation involves the development of algorithms for calculations, often on large sets of data or with high precision. Because many of these computations may take days or months to execute, computer scientists are interested in making the calculations as efficient as possible. They also explore ways to increase the numerical precision of computations, which can have such effects as improving the accuracy of a weather forecast. The goals of improving efficiency and precision often conflict, with greater efficiency being obtained at the cost of precision and vice versa.
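The tension between efficiency and precision shows up even in simple addition. The Python sketch below compares a plain running total with `math.fsum`, a standard-library routine that does extra work to track rounding error. The choice of ten copies of 0.1 is an illustrative assumption.

```python
import math

values = [0.1] * 10

# Plain left-to-right floating-point addition: cheap, but rounding errors add up.
naive_total = 0.0
for value in values:
    naive_total += value

# fsum keeps track of the lost low-order bits, at the cost of extra work.
careful_total = math.fsum(values)

print(naive_total == 1.0)    # False
print(careful_total == 1.0)  # True
```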

Symbolic computation involves programs that manipulate nonnumeric symbols, such as characters, words, drawings, algebraic expressions, encrypted data (data coded to prevent unauthorized access), and the parts of data structures that represent relationships between values. One unifying property of symbolic programs is that they often lack the regular patterns of processing found in many numerical computations. Such irregularities present computer scientists with special challenges in creating theoretical models of a program’s efficiency, in translating it into an efficient machine language program, and in specifying and testing its correct behavior.

Computer Program

| I | INTRODUCTION |

Computer Program, set of instructions that directs a computer to perform some processing function or combination of functions. For the instructions to be carried out, a computer must execute a program, that is, the computer reads the program, and then follows the steps encoded in the program in a precise order until completion. A program can be executed many different times, with each execution yielding a potentially different result depending upon the options and data that the user gives the computer.

Programs fall into two major classes: application programs and operating systems. An application program is one that carries out some function directly for a user, such as word processing or game-playing. An operating system is a program that manages the computer and the various resources and devices connected to it, such as RAM (random access memory), hard drives, monitors, keyboards, printers, and modems, so that they may be used by other programs. Examples of operating systems are DOS, Windows 95, OS/2, and UNIX.

| II | PROGRAM DEVELOPMENT |

Software designers create new programs by using special applications programs, often called utility programs or development programs. A programmer uses another type of program called a text editor to write the new program in a special notation called a programming language. With the text editor, the programmer creates a text file, which is an ordered list of instructions, also called the program source file. The individual instructions that make up the program source file are called source code. At this point, a special applications program translates the source code into machine language, or object code, a format that the operating system will recognize as a proper program and be able to execute.

Three types of applications programs translate from source code to object code: compilers, interpreters, and assemblers. The three operate differently and on different types of programming languages, but they serve the same purpose of translating from a programming language into machine language.

A compiler translates text files written in a high-level programming language—such as Fortran, C, or Pascal—from the source code to the object code all at once. This differs from the approach taken by interpreted languages such as BASIC, APL and LISP, in which a program is translated into object code statement by statement as each instruction is executed. The advantage to interpreted languages is that they can begin executing the program immediately instead of having to wait for all of the source code to be compiled. Changes can also be made to the program fairly quickly without having to wait for it to be compiled again. The disadvantage of interpreted languages is that they are slow to execute, since the entire program must be translated one instruction at a time, each time the program is run. On the other hand, compiled languages are compiled only once and thus can be executed by the computer much more quickly than interpreted languages. For this reason, compiled languages are more common and are almost always used in professional and scientific applications.

Another type of translator is the assembler, which is used for programs or parts of programs written in assembly language. Assembly language is another programming language, but it is much more similar to machine language than other types of high-level languages. In assembly language, a single statement can usually be translated into a single instruction of machine language. Today, assembly language is rarely used to write an entire program, but is instead most often used when the programmer needs to directly control some aspect of the computer’s function.

Programs are often written as a set of smaller pieces, with each piece representing some aspect of the overall application program. After each piece has been compiled separately, a program called a linker combines all of the translated pieces into a single executable program.

Programs seldom work correctly the first time, so a program called a debugger is often used to help find problems called bugs. Debugging programs usually detect an event in the executing program and point the programmer back to the origin of the event in the program code.

Recent programming systems, such as Java, use a combination of approaches to create and execute programs. A compiler takes a Java source program and translates it into an intermediate form. Such intermediate programs are then transferred over the Internet into computers where an interpreter program then executes the intermediate form as an application program.
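The two-stage approach can be sketched in Python, which is not mentioned in the article but whose standard implementation likewise compiles source text into an intermediate form that an interpreter then executes. The built-ins `compile` and `exec` expose the two stages. The source string and variable names are illustrative assumptions.

```python
source = "result = price * quantity"

# Stage 1: translate the source text once into an intermediate form.
code = compile(source, "<example>", "exec")

# Stage 2: the interpreter executes the same intermediate form,
# here twice, with different data each time.
first = {"price": 3, "quantity": 4}
exec(code, first)

second = {"price": 10, "quantity": 2}
exec(code, second)

print(first["result"], second["result"])  # 12 20
```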

| III | PROGRAM ELEMENTS |

Most programs are built from just a few kinds of steps that are repeated many times in different contexts and in different combinations throughout the program. The most common step performs some computation, and then proceeds to the next step in the program, in the order specified by the programmer.

Programs often need to repeat a short series of steps many times, for instance in looking through a list of game scores and finding the highest score. Such repetitive sequences of code are called loops.
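The game-score example might look like the following in Python (a language chosen here only for illustration). The function name and the sample scores are assumptions.

```python
def highest_score(scores):
    """Return the largest value in a non-empty list of scores."""
    highest = scores[0]
    for score in scores[1:]:   # the loop body runs once per remaining score
        if score > highest:
            highest = score
    return highest


game_scores = [310, 475, 120, 980, 640]
print(highest_score(game_scores))  # 980
```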

One of the capabilities that makes computers so useful is their ability to make conditional decisions and perform different instructions based on the values of data being processed. If-then-else statements implement this function by testing some piece of data and then selecting one of two sequences of instructions on the basis of the result. One of the instructions in these alternatives may be a goto statement that directs the computer to select its next instruction from a different part of the program. For example, a program might compare two numbers and branch to a different part of the program depending on the result of the comparison:

If x is greater than y then go to instruction #10 else continue

Programs often use a specific sequence of steps more than once. Such a sequence of steps can be grouped together into a subroutine, which can then be called, or accessed, as needed in different parts of the main program. Each time a subroutine is called, the computer remembers where it was in the program when the call was made, so that it can return there upon completion of the subroutine. Preceding each call, a program can specify that different data be used by the subroutine, allowing a very general piece of code to be written once and used in multiple ways.
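A short Python sketch of a subroutine that is written once and called with different data. The name `count_above` and the sample data are hypothetical.

```python
def count_above(values, threshold):
    """Subroutine: count how many values exceed the threshold."""
    count = 0
    for value in values:
        if value > threshold:
            count += 1
    return count  # control returns to wherever the call was made


temperatures = [18, 25, 31, 22, 35]
exam_marks = [55, 72, 90, 48]

hot_days = count_above(temperatures, 30)  # first call, one set of data
passes = count_above(exam_marks, 50)      # second call, different data
print(hot_days, passes)  # 2 3
```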

Most programs use several varieties of subroutines. The most common of these are functions, procedures, library routines, system routines, and device drivers. Functions are short subroutines that compute some value, such as computations of angles, which the computer cannot compute with a single basic instruction. Procedures perform a more complex function, such as sorting a set of names. Library routines are subroutines that are written for use by many different programs. System routines are similar to library routines but are actually found in the operating system. They provide some service for the application programs, such as printing a line of text. Device drivers are system routines that are added to an operating system to allow the computer to communicate with a new device, such as a scanner, modem, or printer. Device drivers often have features that can be executed directly as applications programs. This allows the user to directly control the device, which is useful if, for instance, a color printer needs to be realigned to attain the best printing quality after changing an ink cartridge.

| IV | PROGRAM FUNCTION |

Modern computers usually store programs on some form of magnetic storage media that can be accessed randomly by the computer, such as the hard disk drive permanently located in the computer, or a portable floppy disk. Additional information on such disks, called directories, indicates the names of the various programs on the disk, when they were written to the disk, and where each program begins on the disk media. When a user directs the computer to execute a particular application program, the operating system looks through these directories, locates the program, and reads a copy into RAM. The operating system then directs the CPU (central processing unit) to start executing the instructions at the beginning of the program. Instructions at the beginning of the program prepare the computer to process information by locating free memory locations in RAM to hold working data, retrieving copies of the standard options and defaults the user has indicated from a disk, and drawing initial displays on the monitor.

The application program requests a copy of any information the user enters by making a call to a system routine. The operating system converts any data so entered into a standard internal form. The application then uses this information to decide what to do next—for example, perform some desired processing function such as reformatting a page of text, or obtain some additional information from another file on a disk. In either case, calls to other system routines are used to actually carry out the display of the results or the accessing of the file from the disk.

When the application reaches completion or is prompted to quit, it makes further system calls to make sure that all data that needs to be saved has been written back to disk. It then makes a final system call to the operating system indicating that it is finished. The operating system then frees up the RAM and any devices that the application was using and awaits a command from the user to start another program.

| V | THE FUTURE |

The field of computer science has grown rapidly since the 1950s due to the increasing use of computers. Computer programs have undergone many changes during this time in response to user need and advances in technology. Newer ideas in computing, such as parallel computing, distributed computing, and artificial intelligence, have radically altered the traditional concepts that once determined program form and function.

Computer scientists working in the field of parallel computing, in which multiple CPUs cooperate on the same problem at the same time, have introduced a number of new program models. In parallel computing, parts of a problem are worked on simultaneously by different processors, which speeds up the solution of the problem. Many challenges face scientists and engineers who design programs for parallel processing computers, because of the extreme complexity of the systems and the difficulty involved in making them operate as effectively as possible.
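The split, process, and combine structure of a parallel program can be sketched with Python's standard `concurrent.futures` module. This is an illustration of program structure only. It uses a thread pool for simplicity, and in the standard Python implementation threads do not usually run compute-bound code on several CPUs at once, so a process pool would be the more likely choice when real speedup is the goal. The function names and the chunking scheme are assumptions.

```python
from concurrent.futures import ThreadPoolExecutor


def sum_of_squares(chunk):
    """Work on one part of the problem independently of the others."""
    return sum(n * n for n in chunk)


def parallel_sum_of_squares(numbers, workers=4):
    """Split the input into chunks, process them concurrently, combine results."""
    size = max(1, len(numbers) // workers)
    chunks = [numbers[i:i + size] for i in range(0, len(numbers), size)]
    with ThreadPoolExecutor(max_workers=workers) as pool:
        partial_sums = list(pool.map(sum_of_squares, chunks))
    return sum(partial_sums)


numbers = list(range(1, 101))
total = parallel_sum_of_squares(numbers)
print(total)  # 338350
```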

Another type of parallel computing called distributed computing uses CPUs from many interconnected computers to solve problems. Often the computers used to process information in a distributed computing application are connected over the Internet. Internet applications are becoming a particularly useful form of distributed computing, especially with programming languages such as Java. In such applications, a user logs onto a Web site and downloads a Java program onto their computer. When the Java program is run, it communicates with other programs at its home Web site, and may also communicate with other programs running on different computers or Web sites.

Research into artificial intelligence (AI) has led to several other new styles of programming. Logic programs, for example, do not consist of individual instructions for the computer to follow blindly, but instead consist of sets of rules: if x happens then do y. A special program called an inference engine uses these rules to “reason” its way to a conclusion when presented with a new problem. Applications of logic programs include automatic monitoring of complex systems, and proving mathematical theorems.

A radically different approach to computing in which there is no program in the conventional sense is called a neural network. A neural network is a group of highly interconnected simple processing elements, designed to mimic the brain. Instead of having a program direct the information processing in the way that a traditional computer does, a neural network processes information depending upon the way that its processing elements are connected. Programming a neural network is accomplished by presenting it with known patterns of input and output data and adjusting the relative importance of the interconnections between the processing elements until the desired pattern matching is accomplished. Neural networks are usually simulated on traditional computers, but unlike traditional computer programs, neural networks are able to learn from their experience.
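Training by adjusting connection strengths can be sketched with the simplest possible network, a single processing element (a perceptron) simulated in Python. This is a minimal illustration under assumed settings (learning rate 0.1, 20 passes over the data, the logical AND pattern) and is far smaller than any practical neural network.

```python
def train_perceptron(samples, epochs=20, rate=0.1):
    """Adjust connection weights until outputs match the known patterns."""
    weights = [0.0, 0.0]
    bias = 0.0
    for _ in range(epochs):
        for inputs, target in samples:
            activation = sum(w * x for w, x in zip(weights, inputs)) + bias
            output = 1 if activation > 0 else 0
            error = target - output
            # Strengthen or weaken each connection in proportion to the error.
            weights = [w + rate * error * x for w, x in zip(weights, inputs)]
            bias += rate * error
    return weights, bias


def predict(weights, bias, inputs):
    """Compute the trained element's output for one input pattern."""
    activation = sum(w * x for w, x in zip(weights, inputs)) + bias
    return 1 if activation > 0 else 0


# Known input/output patterns for logical AND.
and_samples = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]
weights, bias = train_perceptron(and_samples)
```

No line of this program states the AND rule directly; the behavior emerges from the weights that the training loop settles on.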

DEBUGGER

Debugger, in computer science, a program designed to help in debugging another program by allowing the programmer to step through the program, examine data, and check conditions. There are two basic types of debuggers: machine-level and source-level. Machine-level debuggers display the actual machine instructions (disassembled into assembly language) and allow the programmer to look at registers and memory locations. Source-level debuggers let the programmer look at the original source code (C or Pascal, for example), examine variables and data structures by name, and so on.

Programming Language

| I | INTRODUCTION |

Programming Language, in computer science, artificial language used to write a sequence of instructions (a computer program) that can be run by a computer. Similar to natural languages, such as English, programming languages have a vocabulary, grammar, and syntax. However, natural languages are not suited for programming computers because they are ambiguous, meaning that their vocabulary and grammatical structure may be interpreted in multiple ways. The languages used to program computers must have simple logical structures, and the rules for their grammar, spelling, and punctuation must be precise.

Application of Programming Languages

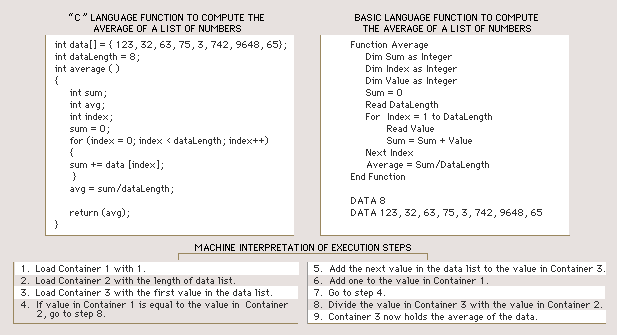

Programming languages allow people to communicate with computers. Once a job has been identified, the programmer must translate, or code, it into a list of instructions that the computer will understand. A computer program for a given task may be written in several different languages. Depending on the task, a programmer will generally pick the language that will involve the least complicated program. It may also be important to the programmer to pick a language that is flexible and widely compatible if the program will have a range of applications. The examples shown here are programs written to average a list of numbers. Both C and BASIC are commonly used programming languages. The machine interpretation shows how a computer would process and execute the commands from the programs.
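For comparison with the C and BASIC versions described in the caption, the same averaging task might be written as follows in Python, a language the article does not cover. The function name and sample numbers are assumptions.

```python
def average(numbers):
    """Return the arithmetic mean of a non-empty list of numbers."""
    total = 0
    for number in numbers:
        total += number
    return total / len(numbers)


print(average([4, 8, 15, 16, 23, 42]))  # 18.0
```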

Programming languages vary greatly in their sophistication and in their degree of versatility. Some programming languages are written to address a particular kind of computing problem or for use on a particular model of computer system. For instance, programming languages such as Fortran and COBOL were written to solve certain general types of programming problems—Fortran for scientific applications, and COBOL for business applications. Although these languages were designed to address specific categories of computer problems, they are highly portable, meaning that they may be used to program many types of computers. Other languages, such as machine languages, are designed to be used by one specific model of computer system, or even by one specific computer in certain research applications. The most commonly used programming languages are highly portable and can be used to effectively solve diverse types of computing problems. Languages like C, PASCAL, and BASIC fall into this category.

| II | LANGUAGE TYPES |

Programming languages can be classified as either low-level languages or high-level languages. Low-level programming languages, or machine languages, are the most basic type of programming languages and can be understood directly by a computer. Machine languages differ depending on the manufacturer and model of computer. High-level languages are programming languages that must first be translated into a machine language before they can be understood and processed by a computer. Examples of high-level languages are C, C++, PASCAL, and Fortran. Assembly languages are intermediate languages that are very close to machine language and do not have the level of linguistic sophistication exhibited by other high-level languages, but must still be translated into machine language.

| A | Machine Languages |

In machine languages, instructions are written as sequences of 1s and 0s, called bits, that a computer can understand directly. An instruction in machine language generally tells the computer four things: (1) where to find one or two numbers or simple pieces of data in the main computer memory (Random Access Memory, or RAM), (2) a simple operation to perform, such as adding the two numbers together, (3) where in the main memory to put the result of this simple operation, and (4) where to find the next instruction to perform. While all executable programs are eventually read by the computer in machine language, they are not all programmed in machine language. It is extremely difficult to program directly in machine language because the instructions are sequences of 1s and 0s. A typical instruction in a machine language might read 10010 1100 1011 and mean add the contents of storage register A to the contents of storage register B.

| B | High-Level Languages |

High-level languages are relatively sophisticated sets of statements utilizing words and syntax from human language. They are more similar to normal human languages than assembly or machine languages and are therefore easier to use for writing complicated programs. These programming languages allow larger and more complicated programs to be developed faster. However, high-level languages must be translated into machine language by another program, such as a compiler or interpreter, before a computer can understand them. For this reason, programs written in a high-level language may take longer to execute and use up more memory than programs written in an assembly language.

| C | Assembly Language |

Computer programmers use assembly languages to make machine-language programs easier to write. In an assembly language, each statement corresponds roughly to one machine language instruction. An assembly language statement is composed with the aid of easy to remember commands. The command to add the contents of the storage register A to the contents of storage register B might be written ADD B,A in a typical assembly language statement. Assembly languages share certain features with machine languages. For instance, it is possible to manipulate specific bits in both assembly and machine languages. Programmers use assembly languages when it is important to minimize the time it takes to run a program, because the translation from assembly language to machine language is relatively simple. Assembly languages are also used when some part of the computer has to be controlled directly, such as individual dots on a monitor or the flow of individual characters to a printer.
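The near one-to-one translation from assembly to machine language can be sketched as a toy assembler in Python. The encoding tables are hypothetical: they are chosen so that `ADD B,A` yields the bit pattern 10010 1100 1011 used as the machine-language example, on the assumption that the bit fields follow the order of the operands in the statement. Real machines define their own opcodes and register numbers.

```python
# Hypothetical encoding tables, chosen only to reproduce the article's example.
OPCODES = {"ADD": "10010"}
REGISTERS = {"B": "1100", "A": "1011"}


def assemble(statement):
    """Translate one statement such as 'ADD B,A' into a string of bits."""
    mnemonic, operands = statement.split(maxsplit=1)
    fields = [OPCODES[mnemonic.upper()]]
    for name in operands.split(","):
        fields.append(REGISTERS[name.strip().upper()])
    return " ".join(fields)


print(assemble("ADD B,A"))  # 10010 1100 1011
```

Each piece of the statement is looked up in a table and copied into the output, which is why translation from assembly language is simple compared with compiling a high-level language.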

| III | CLASSIFICATION OF HIGH-LEVEL LANGUAGES |

High-level languages are commonly classified as procedure-oriented, functional, object-oriented, or logic languages. The most common high-level languages today are procedure-oriented languages. In these languages, one or more related blocks of statements that perform some complete function are grouped together into a program module, or procedure, and given a name such as “procedure A.” If the same sequence of operations is needed elsewhere in the program, a simple statement can be used to refer back to the procedure. In essence, a procedure is just a mini-program. A large program can be constructed by grouping together procedures that perform different tasks. Procedural languages allow programs to be shorter and easier for the computer to read, but they require the programmer to design each procedure to be general enough to be used in different situations.

Functional languages treat procedures like mathematical functions and allow them to be processed like any other data in a program. This allows a much higher and more rigorous level of program construction. Functional languages also allow variables—symbols for data that can be specified and changed by the user as the program is running—to be given values only once. This simplifies programming by reducing the need to be concerned with the exact order of statement execution, since a variable does not have to be redeclared, or restated, each time it is used in a program statement. Many of the ideas from functional languages have become key parts of many modern procedural languages.
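Python is not a purely functional language, but it can illustrate two of the ideas above: functions passed around like any other data, and values that are bound once and never changed. The names below are illustrative assumptions.

```python
from functools import reduce


def square(n):
    return n * n


def compose(f, g):
    """Return a new function that applies g, then f."""
    return lambda value: f(g(value))


def increment(n):
    return n + 1


# Functions are passed to and returned from other functions like any data.
square_then_increment = compose(increment, square)

numbers = (1, 2, 3, 4)                       # an immutable tuple, never modified
squares = tuple(map(square, numbers))        # (1, 4, 9, 16)
total = reduce(lambda a, b: a + b, squares)  # 30
```

Because no value is modified after it is created, the result does not depend on the order in which the intermediate values are computed.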

Object-oriented languages are outgrowths of functional languages. In object-oriented languages, the code used to write the program and the data processed by the program are grouped together into units called objects. Objects are further grouped into classes, which define the attributes objects must have. A simple example of a class is the class Book. Objects within this class might be Novel and Short Story. Objects also have certain functions associated with them, called methods. The computer accesses an object through the use of one of the object’s methods. The method performs some action to the data in the object and returns this value to the computer. Classes of objects can also be further grouped into hierarchies, in which objects of one class can inherit methods from another class. The structure provided in object-oriented languages makes them very useful for complicated programming tasks.
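The Book example might be sketched as follows in Python. This sketch treats Novel as a class that inherits from Book, which is one reading of the example. The attributes, method names, and sample title are hypothetical.

```python
class Book:
    """A class: defines the attributes and methods every book object has."""

    def __init__(self, title, pages):
        self.title = title
        self.pages = pages

    def describe(self):
        """A method: acts on the object's data and returns a value."""
        return f"{self.title} ({self.pages} pages)"


class Novel(Book):
    """A class lower in the hierarchy; it inherits describe() from Book."""

    def is_long(self):
        return self.pages > 400


novel = Novel("Example Novel", 520)  # an object of class Novel
print(novel.describe())              # Example Novel (520 pages)
print(novel.is_long())               # True
```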

Logic languages use logic as their mathematical base. A logic program consists of sets of facts and if-then rules, which specify how one set of facts may be deduced from others, for example:

If the statement X is true, then the statement Y is false.

In the execution of such a program, an input statement can be logically deduced from other statements in the program. Many artificial intelligence programs are written in such languages.
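How new facts are deduced from if-then rules can be sketched with a small forward-chaining loop in Python. This is a simplified illustration; logic languages such as Prolog use more elaborate strategies. The facts and rules below are hypothetical.

```python
def infer(facts, rules):
    """Forward chaining: apply if-then rules until no new facts appear."""
    known = set(facts)
    changed = True
    while changed:
        changed = False
        for conditions, conclusion in rules:
            if set(conditions) <= known and conclusion not in known:
                known.add(conclusion)
                changed = True
    return known


facts = {"has_feathers", "lays_eggs"}
rules = [
    # (conditions that must all hold, fact that may then be deduced)
    (("has_feathers",), "is_bird"),
    (("is_bird", "lays_eggs"), "builds_nest"),
]
print(sorted(infer(facts, rules)))
# ['builds_nest', 'has_feathers', 'is_bird', 'lays_eggs']
```

The rules are data rather than instructions, so the order in which they are listed does not change the final set of facts.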

| IV | LANGUAGE STRUCTURE AND COMPONENTS |

Programming languages use specific types of statements, or instructions, to provide functional structure to the program. A statement in a program is a basic sentence that expresses a simple idea—its purpose is to give the computer a basic instruction. Statements define the types of data allowed, how data are to be manipulated, and the ways that procedures and functions work. Programmers use statements to manipulate common components of programming languages, such as variables and macros (mini-programs within a program).

Statements known as data declarations give names and properties to elements of a program called variables. Variables can be assigned different values within the program. The properties variables can have are called types, and they include such things as what possible values might be saved in the variables, how much numerical accuracy is to be used in the values, and how one variable may represent a collection of simpler values in an organized fashion, such as a table or array. In many programming languages, a key data type is a pointer. Variables that are pointers do not themselves have values; instead, they have information that the computer can use to locate some other variable—that is, they point to another variable.

An expression is a piece of a statement that describes a series of computations to be performed on some of the program’s variables, such as X + Y/Z, in which the variables are X, Y, and Z and the computations are addition and division. An assignment statement assigns a variable a value derived from some expression, while conditional statements specify expressions to be tested and then used to select which other statements should be executed next.

Procedure and function statements define certain blocks of code as procedures or functions that can then be called later in the program. These statements also define the kinds of variables and parameters the programmer can choose and the type of value that the code will return when an expression accesses the procedure or function. Many programming languages also permit mini-translation programs called macros. Macros translate segments of code that have been written in a language structure defined by the programmer into statements that the programming language understands.

FORTRAN

Fortran, in computer science, acronym for FORmula TRANslation. The first high-level computer language (developed 1954-1958 by John Backus) and the progenitor of many key high-level concepts, such as variables, expressions, statements, iterative and conditional statements, separately compiled subroutines, and formatted input/output. Fortran is a compiled, structured language. The name indicates its scientific and engineering roots; Fortran is still used heavily in those fields, although the language itself has been expanded and improved vastly over the last 35 years to become a language that is useful in any field.

COBOL

COBOL, in computer science, acronym for COmmon Business-Oriented Language, a verbose, English-like programming language developed between 1959 and 1961. Its establishment as a required language by the U.S. Department of Defense, its emphasis on data structures, and its English-like syntax (compared to those of Fortran and ALGOL) led to its widespread acceptance and usage, especially in business applications. Programs written in COBOL, which is a compiled language, are split into four divisions: Identification, Environment, Data, and Procedure. The Identification division specifies the name of the program and contains any other documentation the programmer wants to add. The Environment division specifies the computer(s) being used and the files used in the program for input and output. The Data division describes the data used in the program. The Procedure division contains the procedures that dictate the actions of the program. See also Computer.

C (COMPUTER)

C (computer), in computer science, a programming language developed by Dennis Ritchie at Bell Laboratories in 1972; so named because its immediate predecessor was the B programming language. Although C is considered by many to be more a machine-independent assembly language than a high-level language, its close association with the UNIX operating system, its enormous popularity, and its standardization by the American National Standards Institute (ANSI) have made it perhaps the closest thing to a standard programming language in the microcomputer/workstation marketplace. C is a compiled language that contains a small set of built-in functions that are machine dependent. The rest of the C functions are machine independent and are contained in libraries that can be accessed from C programs. C programs are composed of one or more functions defined by the programmer; thus C is a structured programming language.

PASCAL (COMPUTER)

Pascal (computer), a concise procedural computer programming language, designed 1967-71 by Niklaus Wirth. Pascal, a compiled, structured language, built upon ALGOL, simplifies syntax while adding data types and structures such as subranges, enumerated data types, files, records, and sets. Acceptance and use of Pascal exploded with Borland International's introduction in 1984 of Turbo Pascal, a high-speed, low-cost Pascal compiler for MS-DOS systems that has sold over a million copies in its various versions. Even so, Pascal appears to be losing ground to C as a standard development language on microcomputers.

BASIC

BASIC, in computer science, acronym for Beginner's All-purpose Symbolic Instruction Code. A high-level programming language developed by John Kemeny and Thomas Kurtz at Dartmouth College in the mid-1960s. BASIC gained its enormous popularity mostly because of two implementations, Tiny BASIC and Microsoft BASIC, which made BASIC the first lingua franca of microcomputers. Other important implementations have been CBASIC (Compiled BASIC), Integer and Applesoft BASIC (for the Apple II), GW-BASIC (for the IBM PC), Turbo BASIC (from Borland), and Microsoft QuickBASIC. The language has changed over the years. Early versions are unstructured and interpreted. Later versions are structured and often compiled. BASIC is often taught to beginning programmers because it is easy to use and understand and because it contains the same major concepts as many other languages thought to be more difficult, such as Pascal and C.

C++

C++, in computer science, an object-oriented version of the C programming language, developed by Bjarne Stroustrup in the early 1980s at Bell Laboratories and adopted by a number of vendors, including Apple Computer, Sun Microsystems, Borland International, and Microsoft Corporation.

Date: 2015-02-16
